Let's look at exactly what Intel is launching today: a ton of chips, SKUs, boards, boxes, and accessories. They all revolve around Sandy Bridge-EP, the 8-core, 16-thread chip behind the two-socket server platform called Romley.

Romley is the successor to the Westmere-EP based Gulftown, the two-socket server platform that uses the Xeon 5600 line of CPUs. Westmere, and its predecessor Nehalem, shared an architecture, but Romley is the first real server platform to use the new Sandy Bridge architecture found in desktop CPUs since early 2011. It is radically different and vastly more capable, but for now, we will just stick with what you can buy.

There is only one design for the entire E5-2600 line, but most have some features capped or turned off to suit different price points, market needs, and performance scenarios. There are times when turning something off pays dividends to the overall workload, but in general, it is used for marketing reasons. That said, there are enough features to make almost anything in this line a better choice than the older Xeons.

The 2 in 2600 signifies 2-socket, and while there is a 4-socket variant on some slides seen by SemiAccurate, it does not officially exist yet. For the overwhelming majority of workloads though, the 2-socket Romley will be more than powerful enough. The core count is also upped from the previous maximum of six to eight, so with Hyperthreading (HT), each socket now has 16 threads, or 32 in a two-socket server.

One problem with that many threads is feeding them, so the L3 cache is increased to 20MB from Westmere’s 12MB, or 2.5MB per core instead of 2MB. This is backed up by four DDR3 channels that each support DDR3/1600 with up to two DIMMs per channel; a third DIMM per channel drops the speed to 1333MHz. This is a massive step up from the previous three channels at DDR3/1333 with one DIMM per channel or three DIMMs per channel at DDR3/800. Bandwidth and total capacity grew more than the raw core count, and that bottleneck is pushed farther into the distance.
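To put rough numbers on that, here is a minimal back-of-the-envelope sketch. It assumes the standard 64-bit (8-byte) DDR3 channel width and computes theoretical peak bandwidth per socket only; the function and variable names are made up for illustration, and real sustained bandwidth will be lower.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth per socket in GB/s (decimal).

    Assumes each DDR3 channel is 64 bits (8 bytes) wide.
    """
    return channels * mega_transfers * bus_bytes / 1000


# Channel counts and speeds as quoted in the article.
sandy_bridge_ep = peak_bandwidth_gbs(channels=4, mega_transfers=1600)  # 51.2
westmere_ep = peak_bandwidth_gbs(channels=3, mega_transfers=1333)      # 31.992

# L3 cache per core, in MB: 20MB over 8 cores vs 12MB over 6 cores.
l3_per_core_new = 20 / 8  # 2.5
l3_per_core_old = 12 / 6  # 2.0

# Peak bandwidth grows by about 1.6x while core count grows by about 1.33x.
bandwidth_growth = sandy_bridge_ep / westmere_ep
core_growth = 8 / 6

print(f"Sandy Bridge-EP: {sandy_bridge_ep:.1f} GB/s, Westmere-EP: {westmere_ep:.1f} GB/s")
print(f"Bandwidth growth {bandwidth_growth:.2f}x vs core growth {core_growth:.2f}x")
```

On those assumptions, peak bandwidth per core goes up as well, which is the point of the paragraph above.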

If two DIMMs at 1600MHz per channel isn’t enough, Romley supports Load Reduced (LR) DIMMs. We have covered that technology extensively in the past, specifically the Inphi buffer that makes it possible. While others may hit the market soon, Inphi is the only choice for now, and it appears to be quite a good one. LR-DIMMs essentially fake the rank count on a stick of memory and allow you to put in more physical sticks of memory at higher capacities per stick.

While the technology isn’t free, if you need the capacity, it is literally the only way you can get there. In addition to the added expense, LR-DIMMs have two relatively minor drawbacks: one added clock cycle of latency and a bit of power used by the buffer itself. The latency is so minor it is hard to measure on real workloads, especially with such a large cache. If you think about it, one clock cycle of latency vs a 333MHz speed drop and/or half the total capacity is a no-brainer of a choice.

The end result is that LR-DIMMs are a clear win if you need them, but they aren’t cheap. Since they enable some companies to tackle workloads that simply cannot be done without the added capacity, it is less a question of cost than you might think. The added server capacity, not to mention time, needed to make up for memory swap slowdowns will dwarf the cost of LR-DIMMs, if, and only if, you need that capacity.

From a user perspective, the last big advance is a new line of 10GigE network cards. Intel has 10GigE chips available as either LAN on Motherboard (LOM) or a discrete card, and unlike many previous platforms, has core level changes to keep the CPU from becoming a bottleneck. Much of this is due to the Data Direct I/O (DDIO) mentioned in the last part of the series, and it is truly a game changer. From the perspective of the customer, 10GigE just works and doesn’t stutter, choke, or lose frames. Romley is potentially the first platform to do 10GigE the right way out of the box.

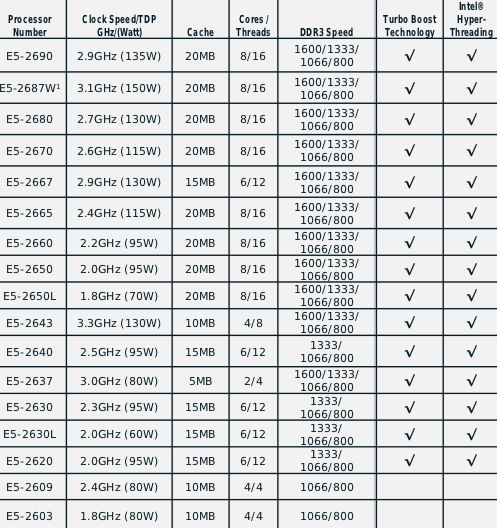

So, with all of these features, when can you get one? Now, or if you are a big customer, you probably have them already. As we mentioned in the first article in the series, you can use one right now, and you are doing it as we speak. This article, and all of SemiAccurate, is being served with a pair of E5-2600 chips. The E5-2600 line has a lot of SKUs, 17 to be specific, with a lot of different variations.

No market niche left unexploited

Core count ranges from 2 to 8, L3 cache from 5MB to 20MB, and threads from 4 to 16, with all but the lowest two SKUs supporting both HT and Turbo. Power ranges from the low voltage L-suffix 60W variants to the frequency optimized 150W W-suffix parts. Similarly, Turbo ranges from no boost at all to a maximum of nine bins (900MHz) for a single active core, depending on SKU, with less boost as more cores are active. With all eight cores active, the maximum boost is between zero and 500MHz, but the highest speed never exceeds 3.9GHz on any part at any time regardless of cores, turbo, or moon phase.
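The bin arithmetic is simple enough to sketch. This assumes one Turbo bin is 100MHz, as implied by nine bins equalling 900MHz, and treats the 3.9GHz figure as a line-wide ceiling to check against rather than a clamp. The base clocks below are hypothetical placeholders, not actual SKU values, and the helper names are made up for illustration.

```python
BIN_MHZ = 100            # one Turbo bin, per "nine bins (900 MHz)"
LINE_CEILING_MHZ = 3900  # highest speed quoted for any part in the line


def turbo_mhz(base_mhz: int, bins: int) -> int:
    """Clock speed in MHz after applying a number of Turbo bins."""
    return base_mhz + bins * BIN_MHZ


def within_line_ceiling(mhz: int) -> bool:
    """True if a clock speed is at or below the 3.9GHz ceiling."""
    return mhz <= LINE_CEILING_MHZ


# Hypothetical part: 2000MHz base, nine bins with one core active,
# five bins with all eight cores active.
single_core = turbo_mhz(2000, 9)
all_cores = turbo_mhz(2000, 5)
print(single_core, all_cores, within_line_ceiling(single_core))
```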

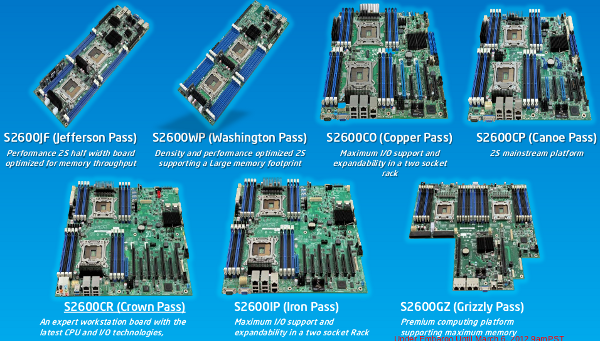

For reference designs, Intel has seven initial board offerings, all of which start with S2600. They have two sockets but can vary in DIMMs per channel, I/O slots, ethernet ports, upgrade slots, SAS availability, and general upgrade-ability. There are three basic form factors: two sub-1U micro-server boards, four standard server boards, and one rack optimized version. SemiAccurate is, as of this article, running on an S2600GZ (Grizzly Pass) board. The family looks like this.

The new kids on the block

Those boards fit into three reference chassis: a microserver housing, a tower, and a 2U rack mount platform. They are called H2000, P4000, and R2000 respectively, and take up 2U, 4U, and 2U of rack space. SemiAccurate’s S2600GZ is in an R2000 chassis at the moment. To top all of this off, there are several NIC, storage, and I/O modules, so the possible combinations are pretty staggering.

In the end, Intel is claiming an 80% increase in performance for E5-2600 over the previous Xeon 5600 line. From our experiences using it to power the server you are reading this on, it is an amazingly capable and powerful product line. It was a solid and worthy upgrade to the rather dated machines we were using in the past, and it would undoubtedly be worth the upgrade from any box that old. In fact, you could make a solid case for upgrading the next two generations after that, and even for upgrading a 5600. Any accounting department worth the bad reputation would probably scream bloody ROI tinged epithets at the mention of it, so pick your battles wisely.

While we can recommend almost any of these chips that fit your price point, we unfortunately can’t bring you the actual prices yet. Intel has traditionally priced new server chips in line with the ones they replace, so that is a pretty good ballpark estimate. We will bring you pricing as soon as we get it, hopefully in the very near future.

Now you know what Intel launched today, and a little bit about the user facing features they support. While gushing about performance has its place, the more interesting question is how Sandy Bridge-EP achieves its seeming magic. In the next few instalments, we will look at facets of the technology in depth, and how they work together. By the time that is done, we will probably have a much better set of test results to share with you on the exact performance of the machine. Stay tuned, and keep clicking, you are doing the testing as we speak. S|A

Charlie Demerjian
