Due to the length of our testing, we’ve broken this review into two separate articles. In this half of the review we’ll continue testing the graphical capabilities of Intel’s HD 4000. You can find the first half of the review here. Now on with the review!

Crysis

With the next game in our suite we aimed to answer that well-worn question: “But can it play Crysis?”

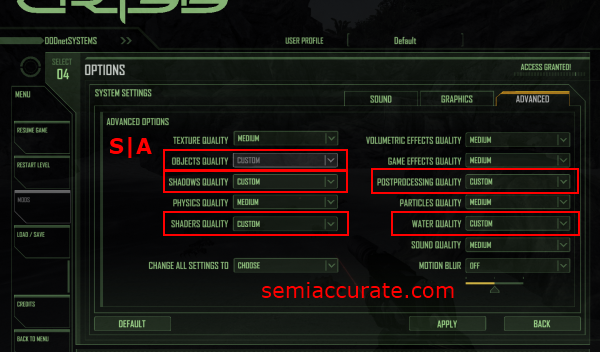

And in this case the answer is yes, at 1080P. Crytek’s Crysis did turn out to be a bit of an oddity, though, because the game opted to use its own “custom” settings when running on the HD 4000. I was getting around 40 frames per second on the low preset and about 12 to 14 frames per second on the medium preset. But when I went to tweak the in-game graphical settings down from the medium preset, I found that the game was using custom object and texture quality settings rather than the medium preset I had selected. Because the game doesn’t make clear what it has changed, it’s hard to say exactly which quality settings it was running at, but at the very least Crysis is playable on the HD 4000.

Here we have yet another in-game screen shot, this time of Crysis running on the HD 4000 using the settings shown in the screen shot above it. Dropping Crysis down from its “Very High” preset really damages the game’s texture, water, and lighting quality. But if you are willing to sacrifice visual fidelity, the game can be playable on Intel’s HD 4000.

Brothers in Arms: Hell’s Highway

Brothers in Arms: Hell’s Highway is an older title from Ubisoft that turned out to be a fairly easy task for the HD 4000. I was able to max out all of the in-game graphical quality settings and get about 30 frames per second at 1080P. Gameplay was smooth and fluid, and there didn’t seem to be any rendering issues.

Here’s an in-game screen shot to give a feel for the visuals the HD 4000 was pumping out in this game. Other than good performance, there isn’t a whole lot to report about running this game on the HD 4000.

Total War: Shogun 2

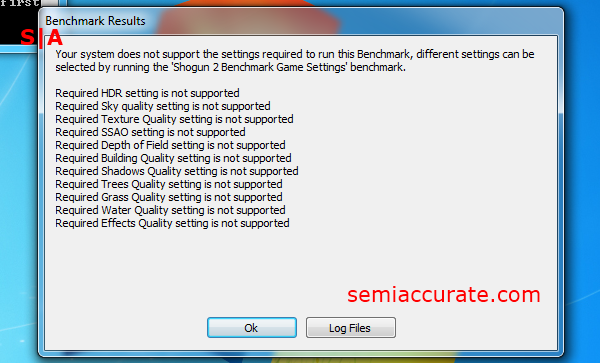

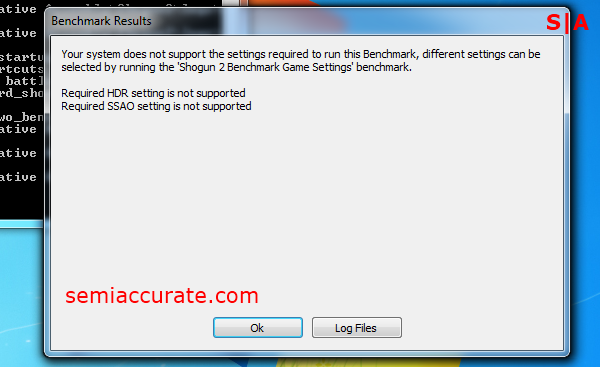

In the case of Total War: Shogun 2 we ran into quite a few problems trying to get it to play nicely with the HD 4000.

The game’s built-in benchmark wouldn’t run because of the small 256MB of memory that the HD 4000 sets aside for itself.

We tried to work around this problem by adjusting the HD 4000’s memory configuration in the BIOS, but we found only three options to choose from: 128MB, 256MB, and MaximumDVT. MaximumDVT was the default setting we had used for all of our benchmarking, and since it was already the highest option, we couldn’t find a way around the 256MB limit.

Meanwhile, trying to launch the benchmark settings panel brought up another window telling me that the HD 4000 didn’t support Shogun 2’s required features, so I should open the benchmark settings panel to disable them. *sigh*

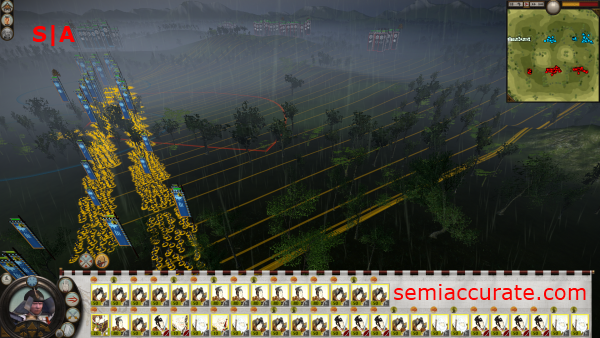

I was able to get the game to run in a normal skirmish, though. While tweaking Shogun 2, I got the impression that the game didn’t support the HD 4000 to the same extent that other games had. One of the big tip-offs was the game disabling graphical settings above its low to medium levels. Oddly enough, it would let me enable its DX11 mode as well as its SSAO and Depth of Field functions, but I couldn’t move the unit or sky quality settings above medium. Another aberration was the way units moved while I was testing the game: their movement seemed decidedly out of step with the rest of the game no matter what quality settings I used or what frame rates I was getting. It was as if unit movement animations were running at half the frame rate of the rest of the game. I’m not sure whether this was due to the low quality settings, the HD 4000, or something else entirely, but it was certainly odd. Despite this, the game was still quite playable at medium to low settings in DX11 mode at a resolution of 1080P.

Shogun 2 is one of those games that can offer really awesome visuals if you have the right equipment, and the HD 4000 is not the right equipment to run it on. As you can see, the visual quality that the game forces on the HD 4000 is pretty rough. Additionally, our frame rates dropped into the low teens as we set more graphical options to “Medium.” So even if we could access the higher-end graphical features in Shogun 2, it’s doubtful that the HD 4000 has enough horsepower left to produce playable frame rates with them enabled.

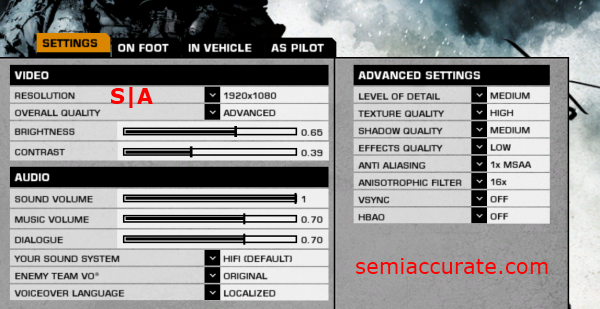

Battlefield: Bad Company 2

DICE’s Battlefield: Bad Company 2 has fallen out of favor with many reviewers due to the popularity of its successor, Battlefield 3. But it’s still a decent benchmark for our purposes, and one the HD 4000 copes with reasonably well. I was able to run the game at what I would consider medium detail settings at 1080P. Frame rates were in the high twenties, and I didn’t notice any artifacts while playing. Overall, the HD 4000 provided an experience competitive with AMD and Nvidia graphics cards that offer the same level of performance.

As you can see in the screen shot above, even at somewhat reduced settings Bad Company 2 maintains most of its visual quality. At this point I think it’s fair to say that the HD 4000 performs particularly well in first-person shooters, compared with the real-time strategy and third-person shooters we’ve tested so far.

Portal 2

Last year’s Portal 2, from game developer Valve, proved a troubling benchmark for stability on Intel’s HD 4000 graphics.

After about ten to fifteen minutes of gameplay, Portal 2 would lock up. The game wouldn’t crash to the desktop; it would simply freeze while the sound looped endlessly, and I’d be forced to kill it with Task Manager. This sort of mid-game crash occurred three times during my testing. I find it a little difficult to believe that a game as popular as Portal 2 wasn’t the target of some driver optimizations and bug fixes on Intel’s part, but clearly the otherwise good performance of the HD 4000 in this game is undermined by serious instability in Intel’s graphics drivers.

This screen shot is from about two minutes before the game crashed. As you can see, the visual quality is quite high at the settings we reached on the HD 4000, though even a little anti-aliasing would have gone a long way in this game. In all sincerity, if the game hadn’t crashed while I was playing through its campaign, I might not have noticed the instability, and, more importantly, I might have actually finished the game for once.

Grand Theft Auto IV

The game that gave me the most trouble on the HD 4000 was Rockstar’s Grand Theft Auto IV.

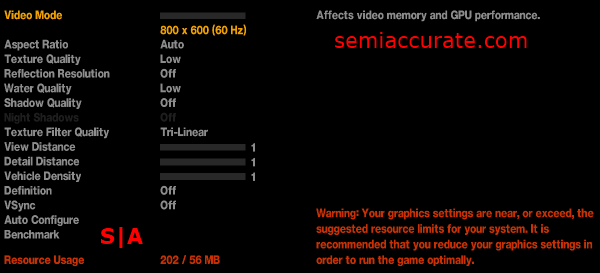

This is a game notorious for needing a lot of GPU, and even CPU, horsepower to achieve playable frame rates at its highest quality settings. With this in mind, I wasn’t expecting the HD 4000 to perform all that well, but worse than bad performance, I ran into the game not recognizing the graphics hardware and defaulting to its lowest quality settings. Even worse, the game would reset to those lowest settings every time I tried to change a graphical setting from within the game. Worse still, the game would lock up and crash every time I actually attempted to play it. The only way I could get the game’s menu system to run at 1080P was by forcing that resolution as one of the game’s launch options. But even with the right resolution forced, the game was unplayable: when I tried to start a new game or load one of my old saves, the HD 4000 couldn’t render a single frame before the game crashed. If there was ever a poster child for games that have issues with Intel’s HD 4000 graphics, it would be Grand Theft Auto IV.

Civilization V

Next up is Sid Meier’s Civilization V from Firaxis. As turn-based strategy games go, Civ 5 is as big as they get, and it also happens to be a favorite of most reviewers thanks to its turn-based nature and DX 11 pedigree.

We were able to tweak Civ 5 up to pretty decent settings on the HD 4000. Once again we used a resolution of 1080P in DX 11 mode without any anti-aliasing. The HD 4000 handled Civ 5 very gracefully: image quality was good and I didn’t notice any artifacts. We should note that frame rates were once again rather low, in the high teens when zoomed out and the high twenties when zoomed three-quarters of the way in, but the game exhibited no input or animation lag and was completely playable.

The level of visual quality we were able to achieve with the HD 4000 in this game was quite good. Other than the lack of anti-aliasing, you would be hard-pressed to tell these screen shots apart from a higher-end configuration.

Mirror’s Edge

Mirror’s Edge is one of my favorite games, and despite the first-person platforming quirks that this game exhibits, it’s still one of the most creative games that DICE has produced in the last decade.

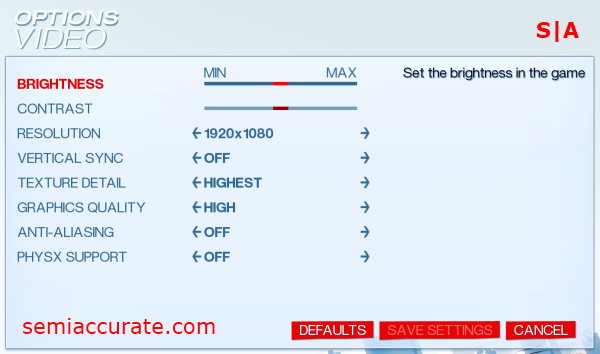

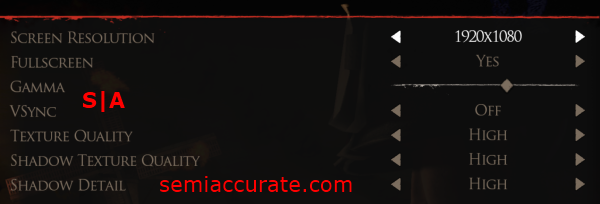

As one of the older games we’re testing in this article, Mirror’s Edge only supports DX 10, which is one of the reasons the HD 4000 performs so well in it. We were once again able to set our resolution to 1080P, although to get playable frame rates we had to drop the graphics quality down to high, disable anti-aliasing, and, of course, turn off PhysX support. Throughout our time in this game, frame rates stayed above 20 and sometimes peaked just above 30.

Screen shots taken from this game on the HD 4000 look quite good. Despite the fact that we are not running the game at its highest settings, graphical quality remains quite high. The biggest negative about running Mirror’s Edge on the HD 4000 is the lack of anti-aliasing.

Final Words

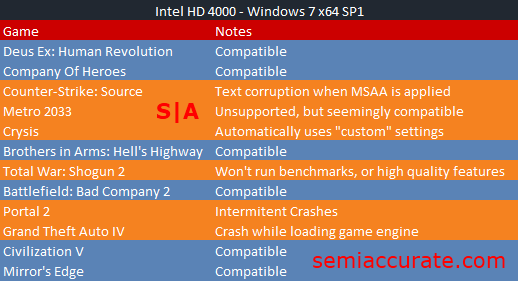

Throughout this review Intel’s HD 4000 has proven itself to be a capable gaming platform that can support* gaming at HD resolutions using reasonable quality settings. The asterisk here is that the previous statement is only true if the game you want to play is supported by Intel’s graphics drivers. Let’s take a quick look at a rundown of the games that did and didn’t work on the HD 4000.

Of the 12 games I tested, the HD 4000 demonstrated complete compatibility with only half of them. This is why I find myself in a difficult position as I try to decide on a final verdict for the HD 4000. When a game actually runs stably on the HD 4000, it often produces an experience competitive with discrete graphics solutions and significantly superior to all of Intel’s previous offerings. At the same time, though, it’s hard to shake the feeling that the software stack Intel has built for the HD 4000 is an unfinished beta.

Verdict

I for one sincerely wish that the HD 4000’s software side was as solid as its hardware side has proven itself to be. But alas, it’s not, and based on Intel’s less-than-wonderful track record on driver support and development, I find it hard to get my hopes up that we’ll see Intel make the moves it needs to make in order to be a real contender in anything other than the low-end graphics segment. Intel’s HD 4000, despite being superior to any graphics offering Intel has ever created, is a product that suffers from being the right hardware shackled to the wrong software.

Pros:

- The latest generation of Intel HD graphics

- Fastest GPU Intel has ever mass-produced

- Most attractive driver control panel on the market

- Good performance at 1080P

- Is sold only when attached to high-performance Ivy Bridge CPU cores

- GPU based OpenCL 1.1 support

- Full DX 11 support

- Support for twice as many games at launch as last year’s HD 3000

- Anisotropic filtering is improved over the HD 3000 generation

Cons:

- Only supports 100 games by Intel’s own count

- Is not completely compatible with half the games we tested

- The drivers do not offer many configurable settings outside of the basics

- Anisotropic filtering is still not perfect

- Late to the DX 11 scene

- Late to fully support OpenCL 1.1

S|A

Thomas Ryan
