Hot Chips 24 had its usual tutorials on the first day, and this year the second topic, chip stacking, was of particular interest to SemiAccurate. Five companies, AMD, Amkor, Qualcomm, UMC, and Xilinx, gave seven presentations, which we will summarize and analyze.

The idea behind chip stacking is an easy one: take multiple small dies that are easy to make and combine them into one unit. You can stack chips from different and incompatible processes, have massive die to die bandwidth, save power, save cost, and do things that are flat out not possible with a large monolithic die. In short, it may not be silicon nirvana, but it is as close as we will come in the near future.

Unfortunately, the problems are as severe as the benefits are positive, if not worse. In fact, the most important problem, that we can’t actually make a 3D package with high wattage silicon, is quite the show stopper. From there, the near complete lack of design tools, nonexistent standards, and the absence of an industry knowledge base are nearly as crushing. That said, innovation and progress are moving things along, and last year Xilinx came out with the first real high wattage 2.5D device, a four die FPGA on an interposer. This might be the reason the company had three talks at Hot Chips: they are doing it, others are talking.

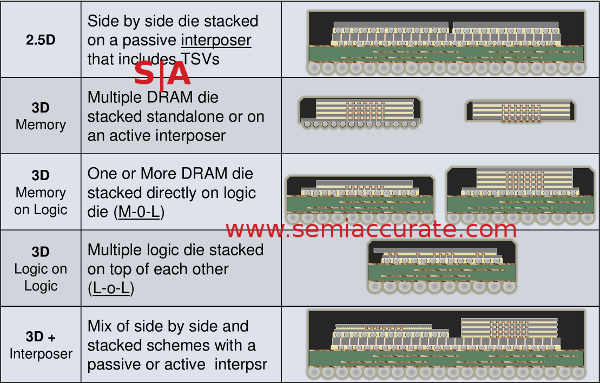

Types of stacks from Qualcomm’s presentations

One of the first problems that you run into when talking about stacking is simple: terminology. In recent months, that has become much less of an issue. Interposers are now considered 2.5D, and die on die is generally thought of as 3D. As you can see from the picture above, it can get complex from there with multiple types in a single package. In the end, those two definitions are the ones that really matter.

So, why do we need stacking? What does it do that a single monolithic die can’t do better? There are two main trends coming about because of Moore’s Law: I/O density and power use. Couple that with the economic reality that bigger chips are harder to make, and you are headed toward a very bleak future. Transistor density may double every 18 months, but we are at the point where the extra transistors don’t actually do anything useful. Why? Let’s take a look.

The first problem is that I/O density, commonly referred to as pins or bumps, has to increase in proportion to the transistor count. The latter doubles every 18 months, while the bumps go up at a much slower pace. Bumps are tricky to design: they are electrical contacts, thermal conduits, and mechanical attachment points, all in a single chunk of solder far thinner than a human hair. If you engineer them right, all is well. If you don’t, you have catastrophic failures, just ask Nvidia.

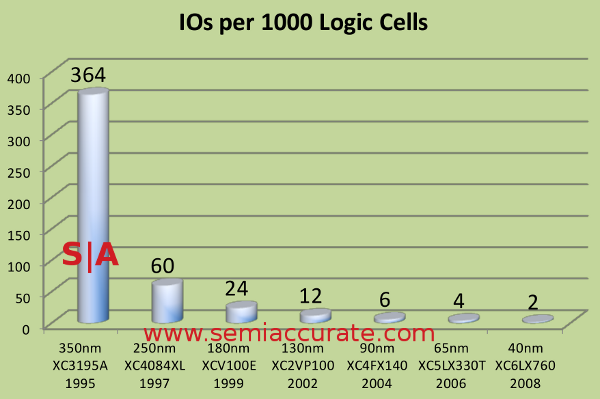

I/Os per 1000 logic cells for Xilinx FPGAs

As you can see from the above slide presented at the Xilinx talk, the I/O count per 1000 logic cells has gone from 364:1000 to 2:1000 over the last 15 years. While each I/O may have gotten faster during that time, so has each logic cell. Modern chips, be they DSPs, SoCs, CPUs, or GPUs, are starved for I/O.

Even if you can physically fit that many bumps on the bottom of the die, you have to route them all through the package, the board, and to things around the socket. The longer you make the traces, the slower they go, the more noise they generate, and the more power they consume. If you can make things short, you win. More traces to route means more length, board layers, cost, and headaches.

That brings us to the next problem: the power consumed by the I/Os obviously goes up with their count, all other things being equal. If you can slap 10x the memory pins down, you will use 10x the I/O power for memory. The power of each I/O is also proportional to the RC (Resistance Capacitance) of the link and its speed, so faster and more complex routing equals more power. After a point, more speed simply burns more power than adding another pin.
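To put rough numbers on that scaling, here is a minimal sketch using the standard CMOS switching power approximation (activity × C × V² × f per pin). This formula is a stand-in chosen for illustration, not something from the Hot Chips talks, and every value below (pin counts, capacitance, voltage, toggle rate, activity factor) is a hypothetical assumption.

```python
def io_switching_power_w(pins, capacitance_f, voltage_v, toggle_hz, activity=0.5):
    """Approximate total I/O switching power in watts.

    Uses the textbook estimate activity * C * V^2 * f per pin.
    All inputs are illustrative assumptions, not measured data.
    """
    return pins * activity * capacitance_f * voltage_v ** 2 * toggle_hz


# Hypothetical baseline: 64 pins driving long board traces (~5 pF each).
base = io_switching_power_w(64, 5e-12, 1.5, 800e6)

# 10x the pins over the same traces: power scales with pin count.
wide = io_switching_power_w(640, 5e-12, 1.5, 800e6)

# 10x the pins over much shorter links (~0.5 pF each, assumed).
short = io_switching_power_w(640, 0.5e-12, 1.5, 800e6)

print(f"baseline: {base:.3f} W, 10x pins: {wide:.3f} W, 10x pins on short links: {short:.3f} W")
```

Under these assumptions, ten times the pins on the same traces costs ten times the power, while cutting the load per link by the same factor brings the wide interface back to the baseline budget. That is the trade that short on-package links are chasing.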

Then there is the problem of simple chip building economics coming to bear. Yield goes down roughly in proportion to the square of the die area, so a 100mm^2 die will have 4x lower yields than a 50mm^2 die. There are lots of mitigating factors, but the basic idea that bigger is more than linearly worse than smaller is unavoidable. It isn’t hard to see how four smaller dies could make more sense than one bigger die.
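As a back-of-the-envelope illustration of why four smaller dies can beat one bigger die, the sketch below swaps in the textbook Poisson yield model (yield = exp(-area × defect density)) rather than the square-law rule of thumb above. The defect density and die sizes are hypothetical, and the comparison assumes dies are tested before assembly and ignores interposer cost and assembly losses.

```python
import math


def poisson_yield(area_mm2, defects_per_mm2):
    """Fraction of dies with zero defects under a simple Poisson model."""
    return math.exp(-area_mm2 * defects_per_mm2)


def silicon_per_good_unit(die_area_mm2, dies_per_unit, defects_per_mm2):
    """Wafer area (mm^2) consumed per working unit.

    Assumes each die is tested first, so only good dies get assembled.
    """
    die_yield = poisson_yield(die_area_mm2, defects_per_mm2)
    return dies_per_unit * die_area_mm2 / die_yield


D0 = 0.005  # hypothetical defect density, in defects per mm^2

monolithic = silicon_per_good_unit(400, 1, D0)  # one 400 mm^2 die
stacked = silicon_per_good_unit(100, 4, D0)     # four 100 mm^2 dies

print(f"monolithic: {monolithic:.0f} mm^2 per good unit")
print(f"four dies:  {stacked:.0f} mm^2 per good unit")
print(f"ratio:      {monolithic / stacked:.2f}x")
```

With these made-up numbers the monolithic part burns several times more silicon per working unit. The real gap depends heavily on the process, on how much redundancy the design has, and on what the packaging itself costs.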

Note: This is Part 1 of 2.

Charlie Demerjian
