It looks like Calxeda has a Silver Lining with the release of their new SLS FIA-2100 fabric switch. SemiAccurate was quite surprised to hear that it would be first paired by Silver Lining Systems with the new AMD A1100.

You might recall from our earlier article that Silver Lining Systems bought up the assets of Calxeda and promised to keep things going. They did, and now have a white box ARM server called the A300, built on the second generation, A15 based ECX-2000. This 2U server has 48 ex-Calxeda SoCs on board, with the fabric, interconnect, and management software mostly developed by pre-meltdown Calxeda. While the CPU silicon may have underwhelmed, the fabric and management software were top-notch.

Step forward to the new Silver Lining Systems and their new FIA-2100 fabric switch, essentially an ECX-2000 with the cores removed. It comes as a PCIe card which looks to the host system like a standard NIC with a local switch. These can be chained together like the old Calxeda fabric, mainly because it is the old Calxeda fabric. Since the topology is programmable, you can build anything you want, from rings to trees and even a rack scale backplane. It supports failover, redundancy, and all the usual advanced features you would expect.

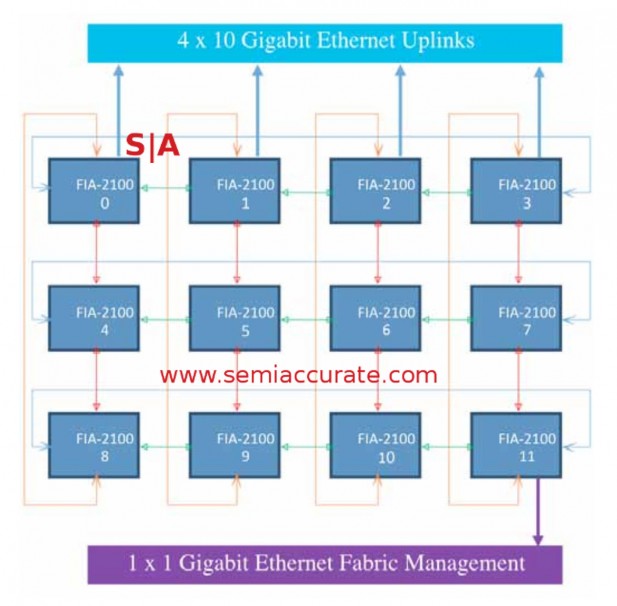

A basic FIA-2100 fabric block

The default topology is two sets of 12 sockets, each arranged in the 3×4 torus above. Each set of three servers has a 10GbE uplink to a standard top of rack switch, in this case a Cisco Nexus 3524; the fabric takes care of the other links. This direct socket to socket interconnect saves a ton of money: the fabric adapters are cheaper per port than a standard 10GbE adapter, and the savings on the top of rack switches add up fast. On top of that you get potentially better failover and management through the old Calxeda architecture and software.
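To put rough numbers on that layout, here is a minimal Python sketch of a 3×4 torus and the uplink math. It is an illustration, not anything from SLS: the function names are made up, it assumes a plain 2D torus where every node links to four wrap-around neighbors (the actual FIA-2100 link count per card is not stated here), and it assumes one socket per server.

```python
from itertools import product

ROWS, COLS = 3, 4  # one 3x4 torus = 12 sockets, per the default topology


def torus_neighbors(r, c, rows=ROWS, cols=COLS):
    """Wrap-around neighbors of node (r, c) in a plain 2D torus (assumed model)."""
    return {
        ((r - 1) % rows, c),
        ((r + 1) % rows, c),
        (r, (c - 1) % cols),
        (r, (c + 1) % cols),
    }


def torus_links(rows=ROWS, cols=COLS):
    """All undirected fabric links, each stored as a frozenset of two nodes."""
    links = set()
    for r, c in product(range(rows), range(cols)):
        for neighbor in torus_neighbors(r, c, rows, cols):
            links.add(frozenset({(r, c), neighbor}))
    return links


def uplinks_needed(sockets, servers_per_uplink=3):
    """10GbE uplinks to the ToR switch, one per group of three servers."""
    return -(-sockets // servers_per_uplink)  # ceiling division


if __name__ == "__main__":
    total_sockets = 2 * ROWS * COLS  # two tori of 12 sockets each
    print("fabric links per torus:", len(torus_links()))
    print("ToR ports with the fabric:", uplinks_needed(total_sockets))
    print("ToR ports with one 10GbE NIC per server:", total_sockets)
```

Under those assumptions, 24 sockets need 8 top of rack ports instead of 24, which is where the switch savings come from.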

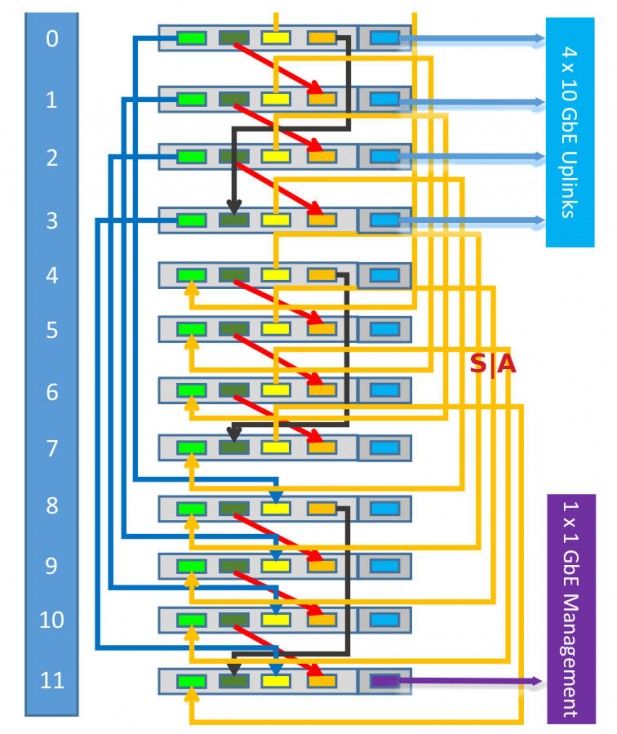

A rack of ex-Calxeda fabric devices

Silver Lining Systems' pitch is that you use less expensive cables, less expensive adapters, and fewer of both, plus the real expense, ToR switch port count, is lower as well. The FIA-2100 cards connect to the CPU over standard PCIe2, so nothing more complex than a driver is needed for the network. SLS is claiming that Xeon-D, Denverton, and AMD A1100 will work, as should just about anything else that is PCIe compliant.

The real benefit of all of this goes to AMD though; it changes the game for their datacenter chances. No, it does more than that: it takes Seattle from a curiosity to a contender.

Note: The following is analysis for professional level subscribers only.

Disclosures: Charlie Demerjian and Stone Arch Networking Services, Inc. have no consulting relationships, investment relationships, or hold any investment positions with any of the companies mentioned in this report.

Charlie Demerjian
