Last week Qualcomm gave us numbers for their new Centriq 2400 line of CPUs and they are better than SemiAccurate expected. More importantly Qualcomm comes uncomfortably close to Intel’s performance in the meat of the market.

Let’s start by saying that most of the numbers provided are SPEC scores of one sort or another, which may or may not apply to your workloads, reality, or anything else. That said, SPEC is about as good a comparison as any for broad-based performance in this class of multi-core device. Once you get above a small handful of cores, your workload obviously scales, so it is all about socket throughput, as we said years ago.

Since we are on caveats we will add one more: all the SPEC scores Qualcomm presented are measured but not official, aka estimated, because they have not been officially submitted yet. Expect them to be submitted soon, if they have not been already, and become ‘official’.

The lineup all of three deep

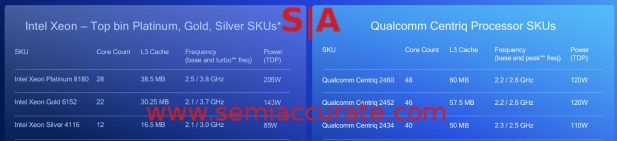

What did Qualcomm release? Three Centriq 2400 SoCs: the 48C 2460, the 46C 2452, and the 40C 2434. The above chart lines each of them up against what Qualcomm thinks is the equivalent Xeon, but there is little knowledge to be drawn from this lineup. The specs for the Centriq line also don’t shed much light.

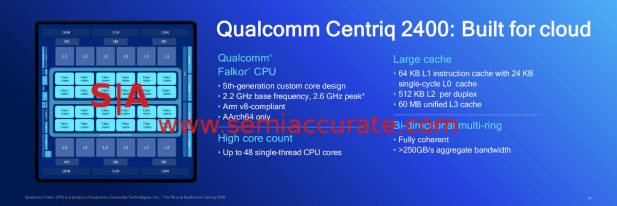

Centriq specs

The base clocks of the Centriq CPU are roughly in line with the equivalent Xeons, as are the thread counts. Intel wins hands down on turbo clocks but Qualcomm added the caveat of “consistent peak” likely meaning all cores turbo to 2.6GHz and do so very often. That said there is once again little to be gleaned from this set of numbers. Cores and threads are nice but if you can’t feed them, who cares how fast they could go. Interestingly for the same rough thread counts, 56 for Intel, 48 for Qualcomm, both chips have the same memory layout of 6x DDR4 channels.

Centriq Core Performance

Now we start getting to the good stuff, performance. We will note that this is against the Xeon 8160, not the top line 8180, in order to make the thread count the same, 48 vs 48. In this case Qualcomm wins by a bit, 7%. To save you the math, 56/48 = 1.1667 or ~17% higher performance. Since the 8180 has a 2.5GHz base clock vs the 2.1GHz of the 8160, the bigger 8180 should come in at 662 or roughly 1% better than the Centriq 2400.

Now Socket Performance

Now we can take a bit more from these numbers. On a per-socket basis the Centriq 2460 is roughly equal to a Xeon 8180 and a bit better than the number two 8160 in raw throughput. On a per-thread basis, Qualcomm’s Falkor cores are a bit better than an Intel thread, by about 7%, so a pair of Falkors (Falki?) is better than an Intel core. This may seem silly but it comes into play later. Similarly a pair of Falki is more efficient than a Skylake core: 205W for Intel, 120W for Qualcomm to do the same work, more or less.
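To put a rough number on that efficiency gap, here is a minimal sketch of the arithmetic. It assumes the two parts do exactly equal work and that rated TDP stands in for actual draw, neither of which is strictly true; the function name is made up for illustration.

```python
# Rated TDPs quoted in the text. Treating TDP as real power draw is an assumption.
XEON_8180_TDP_W = 205
CENTRIQ_2460_TDP_W = 120


def perf_per_watt_ratio(perf_a, watts_a, perf_b, watts_b):
    """Return how many times better A's performance per watt is than B's."""
    return (perf_a / watts_a) / (perf_b / watts_b)


# "Same work, more or less": model socket throughput as equal (1.0 each).
ratio = perf_per_watt_ratio(1.0, CENTRIQ_2460_TDP_W, 1.0, XEON_8180_TDP_W)
print(f"Centriq perf/W at TDP: {ratio:.2f}x the Xeon")
```

At TDP this works out to roughly 1.7x in Qualcomm’s favor, but TDP is a ceiling rather than a measurement, so treat it as a ballpark only.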

And now for fuel economy

Qualcomm claims 45% better performance per watt than the Xeon 8180, 32% better than the 6152, and 31% better than the 4116. Unfortunately Qualcomm not only cooked the books there, they openly admitted to doing an unfair comparison here. This opportunistic benchmark enhancing comes from the fact that Centriq is an SoC without a chipset, while Intel needs at least one chipset per system for Xeons. That takes power, board area, and cost that Qualcomm doesn’t incur. If you add this in to the figures above, Qualcomm comes out looking much better on performance per watt than they showed; they underestimated their own advantage like Intel used to do back in the good old days. The take home message here is Qualcomm is probably better than they say, at least on power.

The real world meets PPW

One more point to talk about, average power. The 2460 is a 120W SoC but on SPECint_rate2006 it pulls about half that, 65W median on an 8W idle. While they didn’t put up the Intel numbers for an equivalent CPU, we would expect Intel to be in the same utilization ballpark as a percentage, aka much higher average. Then you add in the chipset for another 10W or so and Qualcomm has the clear advantage. Trying to get technical information out of Intel lately is about as productive as training a brick so I don’t think we will ever have hard numbers here. Also think back to turbo, if a Centriq pulls ~65W on SPEC, it is probably turboing to 2.6GHz for the majority of the run.
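The back-of-the-envelope reasoning above can be sketched as follows. The Centriq figures and the ~10W chipset number come from the text; the Xeon median is purely our extrapolation, assuming it runs at the same fraction of its 205W TDP, since no measured Intel number is available.

```python
# Figures from the text.
CENTRIQ_TDP_W = 120
CENTRIQ_MEDIAN_W = 65   # median draw on SPECint_rate2006
XEON_TDP_W = 205        # Xeon 8180 rated TDP
CHIPSET_W = 10          # "another 10W or so" for the Intel chipset

# Fraction of TDP the Centriq actually pulls during the run.
utilization = CENTRIQ_MEDIAN_W / CENTRIQ_TDP_W

# ASSUMPTION: the Xeon sits at the same fraction of its own TDP.
xeon_median_est = XEON_TDP_W * utilization
xeon_platform_est = xeon_median_est + CHIPSET_W

print(f"Centriq utilization of TDP: {utilization:.0%}")
print(f"Estimated Xeon median draw: {xeon_median_est:.0f}W")
print(f"Estimated Xeon + chipset:   {xeon_platform_est:.0f}W")
```

Under those assumptions the Intel side lands near 120W against 65W for the Centriq, which is the gap the paragraph is pointing at. Swap in real measurements if you ever get them.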

Now we get to the interesting bits, money. Since Centriq is a single socket SoC that doesn’t need a chipset, it will have a lot lower platform cost than an equivalent Xeon. Even if there was a 1S Xeon 8180, it would still have to carry over much of the cost of the multi-socket beasts. That said, the 81xx line of Xeons scales to 8S systems while Centriq can’t even do 2S. This is a massive competitive advantage for Intel and excludes Qualcomm from the top end of the market.

It all comes down to money

That said Qualcomm seems to have a serious price advantage over Intel, and by serious we mean a multiple. As you can see from the above the lowest end Centriq costs about half of what the (Qualcomm picked) equivalent Xeon does, on the high end that goes up to a 4x advantage. This is before the cost of the chipset, not trivial mind you, and the board, system, and support are added in.

Qualcomm’s advantage should grow there but diminish as the price of the whole box, memory, storage, and the rest, are tallied. For out the door cost, Qualcomm should have a net advantage big enough to be quite relevant in purchasing decisions but not a multiple on the system level. Mainstream memory and storage aren’t really expensive but there are a lot more DIMMs than CPUs in an average system. Once you add in running costs, aka electricity, Qualcomm’s TCO advantage grows once again.
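A small sketch shows why a multiple on the CPU shrinks at the system level. The $10,000 Xeon price and the 4x CPU advantage are the article’s own rough figures; the $12,500 for the rest of the box is a purely hypothetical placeholder, as is the function name, so plug in your own quotes.

```python
def system_cost_multiple(cheap_cpu, dear_cpu, shared_cost, dear_only_extra=0.0):
    """How many times more the dearer system costs than the cheaper one.

    shared_cost: memory, storage, chassis and so on, identical for both boxes.
    dear_only_extra: costs only the dearer platform carries (e.g. a chipset).
    """
    return (dear_cpu + shared_cost + dear_only_extra) / (cheap_cpu + shared_cost)


XEON_CPU_USD = 10_000              # low end of the article's rough Xeon range
CENTRIQ_CPU_USD = XEON_CPU_USD / 4 # the article's ~4x high-end CPU advantage
REST_OF_BOX_USD = 12_500           # HYPOTHETICAL: memory, storage, board, etc.

cpu_only = system_cost_multiple(CENTRIQ_CPU_USD, XEON_CPU_USD, 0)
whole_box = system_cost_multiple(CENTRIQ_CPU_USD, XEON_CPU_USD, REST_OF_BOX_USD)

print(f"CPU-only price multiple:  {cpu_only:.1f}x")
print(f"Whole-box price multiple: {whole_box:.1f}x")
```

With these made-up platform costs the 4x CPU gap falls to 1.5x for the whole box: still a big saving, but no longer a multiple. Passing a non-zero `dear_only_extra` for the chipset nudges it back up a little.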

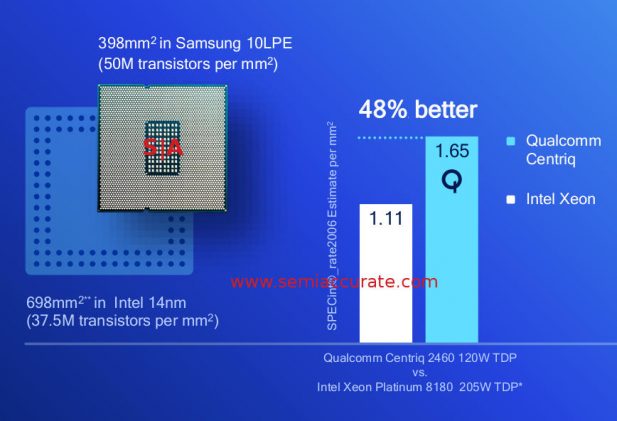

It all comes out with the die

So how does Qualcomm do it? By making a better chip for starters. Centriq 2400 is a full SoC and it is built on a much more efficient process, Samsung 10nm vs Intel 14nm. Intel claims to have the process lead but they are, well, being a tad deceptive. As you can see from the above, Intel claims leadership, but the similar performing and much more efficient Centriq 2400 is ~57% the size of the big XCC Xeon die. A bit scarier for Intel is that the Centriq has a 33% advantage in transistors per mm^2.

In semiconductors, size * process cost = cost, assuming similar yields which given the core counts is a pretty good assumption here. Intel claims the lowest process cost but they also claim to still be ahead in process tech. One of these is provably wrong, the other doesn’t have enough data disclosed to realistically verify so make of the claims what you will. Three years ago we would have believed Intel but the company has come a long way since then.
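That size * process cost relation can be sketched in a few lines. The ~57% area ratio is from the text; the per-mm^2 cost ratio between the two processes is not disclosed anywhere, so it is left as a parameter, and yield, packaging, and test costs are ignored (the text assumes similar yields). The function name is ours.

```python
def relative_die_cost(area_ratio, cost_per_mm2_ratio=1.0):
    """Die cost of chip A relative to chip B: (area A / area B) * (cost/mm^2 A / cost/mm^2 B)."""
    return area_ratio * cost_per_mm2_ratio


AREA_RATIO = 0.57  # Centriq 2400 die is ~57% the size of the XCC Xeon die

# If both processes cost the same per mm^2 (an ASSUMPTION), cost tracks area.
equal_process_cost = relative_die_cost(AREA_RATIO)

# How much more per mm^2 the 10nm process could cost before the dies cost the same.
break_even_ratio = 1.0 / AREA_RATIO

print(f"Relative die cost at equal cost/mm^2: {equal_process_cost:.2f}")
print(f"Break-even cost/mm^2 ratio: {break_even_ratio:.2f}x")
```

In other words, Samsung’s 10nm would have to cost roughly 1.75x as much per mm^2 as Intel’s 14nm before the Centriq die became the more expensive one to make.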

All of this is rather irrelevant in the face of real world workloads. As we pointed out at the start, SPEC may or may not relate to what you do, what anyone else does, or anything in general. Qualcomm presented SPEC numbers because they can’t put out proprietary customer data and SPEC is about the next best thing. Let’s call it the least bad enterprise/server benchmark out there. What we really need is customers talking about their workloads.

Customer Data in the real world

The above slide is from Cloudflare and it is just that, their actual workloads tested on a Centriq 2452 vs 2x Xeon 4116s. The Xeons are what Cloudflare actually has deployed; the Centriqs were a test deployment. So in a real world test by a large provider, the Qualcomm part lined up between a Broadwell and a Skylake system, let’s call it ~10% slower in the real world. That compares favorably to the SPEC numbers too, but do note this is not the same comparison Qualcomm used above.

More importantly the Centriq does its job while pulling roughly half the energy on the CPU side. This again is about what we speculated it would be above. More to the point, the system power, as shown by the rack counts on the right, gives Qualcomm a huge running cost advantage. In short the Centriq system is (likely) cheaper to buy, cheaper to run, able to use less floor space (~= cost) in the datacenter, and runs the same workloads. In at least one real world situation, Centriq lives up to its claims. Talking to other customers at the Qualcomm launch event, they all made similar claims of higher efficiency too.

Taking a hard turn we come to one hot topic on the minds of all data center customers of late, security. We all know Intel doesn’t take security seriously, they refuse to do the right thing which leads to massive breaches. Qualcomm does something interesting here, they put the hardware root of trust on die, not on package or on system, but on the CPU itself. They can do this because the system is a true SoC, no chipset needed. This won’t prevent hacks like the Xeons have seen multiple times, but it does preclude one class of attacks, those on inter-chip communication. Hopefully Qualcomm will do the right thing and open its security code for the world to audit too. *HINT*

So in the end what do we have? Qualcomm made a Centriq SoC that is cheaper to make, cheaper to buy, much cheaper to run, and just as fast as the best Xeons out there. The downside here is that the Xeons scale up to 8S while Qualcomm is stuck on 1S systems, but in the current world of mid-double digit core/thread counts, that is becoming less and less of a handicap. It hurts mainly on workloads that need more memory space, something a second socket doubles, but some of that is brought back by Intel’s $3000 memory tax.

Even with this in mind, for the majority of the market, Centriq should be a clean kill. The 4x price advantage puts the pricing of a 2460 in the $2500-3000 range vs $10,000-13,000 for the Xeon. This is more than enough of an advantage to tempt buyers and that is before the ‘extras’ like cache QoS software which Intel charges for but Qualcomm includes for free. SemiAccurate expects Qualcomm to do quite well with the Centriq line, but as usual with the megadatacenter crowd, large deployments take time. By this time next year, expect Intel to be taking large hits from at least three sides, Qualcomm being one with Centriq 2400. S|A

Charlie Demerjian