The launch of AMD’s Fury X is easily one of the most anticipated events of this year. Coming on the heels of Nvidia’s GeForce GTX 980Ti, which set a new benchmark for high-end value, AMD’s Fury X has its work cut out for it. This time around AMD is attacking the high end with a bigger focus on industrial design and build quality. Building on the premium build quality it first delivered with the R9 295X2 last year, AMD has designed the Fury X to offer viable competition to Nvidia’s GTX 980Ti and Titan X on every front.

As Charlie detailed on Monday, AMD has really upped its build quality. From the matte finish and LEDs to the removable panels and closed-loop water cooling, this is as good as it gets. If nothing else, the Fury X is without qualification a good-looking graphics card, which is a big change of tune for a company that just a few years ago blessed the world with graphics cards that looked more like retro Batmobiles than number-crunching monsters.

Physically, AMD’s Radeon R9 Fury X is a smaller, if rather gangly, graphics card. It’s 194mm long and 102mm tall, with 400mm-long braided tubes that should let you place the radiator pretty much anywhere in a standard ATX case. It’s also two PCI-E slots wide and offers three full-size DisplayPort connectors and one HDMI output. The red Radeon logos on both the top and bottom of the card light up when it has power, and at stock settings the fan is inaudible under load in my office. Whether or not you believe the criticism the R9 290X and R9 290 received for their noise profiles was warranted, the R9 Fury X is in a different category of noise output altogether.

AMD’s Fury X also brings one big software feature to the table: Frame Rate Target Control. This is such an important feature that we’ve dedicated a standalone article to it, but the short version is that it lets you cap the number of frames per second the Fury X will render. A display can only show as many frames per second as its refresh rate allows, so capping your GPU’s rendering rate at your display’s refresh rate can save boatloads of power without compromising your experience. This feature, along with Virtual Super Resolution support, is also present on all Radeon R9 and R7 300 series graphics cards.
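The general idea behind a frame cap can be illustrated with a simple software frame limiter: each frame gets a fixed time budget of 1/target_fps seconds, and if rendering finishes early the limiter waits out the rest of that budget instead of immediately starting the next frame. What follows is a minimal Python sketch under our own assumptions; `run_capped` and `render_frame` are hypothetical names, and AMD’s feature works inside the driver rather than in application code like this.

```python
import time


def run_capped(render_frame, target_fps, num_frames):
    """Render num_frames frames, never faster than target_fps.

    Hypothetical, generic frame limiter for illustration only; this is
    not AMD's driver-level implementation.
    Returns the total time spent idling between frames, in seconds.
    """
    frame_budget = 1.0 / target_fps  # seconds allotted to each frame
    next_deadline = time.perf_counter()
    idle_time = 0.0
    for _ in range(num_frames):
        render_frame()
        next_deadline += frame_budget
        remaining = next_deadline - time.perf_counter()
        if remaining > 0:
            # Finished early: wait until this frame's time slot ends rather
            # than racing ahead. Idle time like this is where a GPU could
            # drop to lower clocks and save power.
            time.sleep(remaining)
            idle_time += remaining
        else:
            # Running behind the target: reset the schedule so missed
            # deadlines don't pile up into a burst of uncapped frames.
            next_deadline = time.perf_counter()
    return idle_time
```

For instance, if a hypothetical game renders each frame in 5ms but is capped to a 60Hz display’s budget of roughly 16.7ms, about 70 percent of every frame interval is spent idle rather than rendering frames the display could never show.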

When Charlie first relayed the details of AMD’s plans for its Fiji chip to me early last year, I was skeptical that AMD could really build a product like this. Especially after Nvidia launched its first Maxwell-based graphics cards, it was apparent that AMD needed to make some big advances to get back in the game. The Fury X is the big advance I was waiting for.

Needless to say, AMD really needed to launch a new high-end GPU, as the R9 290 series is going on two years old now. As Charlie detailed, Fiji ran into delays during development, and its expected arrival slipped from early 2015 to the middle of the year. The real question now is when 16nm chips will start cropping up.

In the meantime, we have a 28nm slugfest between two of the largest chips ever created: Nvidia’s GM200, weighing in at 601mm², and AMD’s Fiji, with a 596mm² die. Nvidia has already shown with the GTX 980Ti that GM200 can set a new bar for value and performance in the high end, and now AMD needs to show the world and his dog that it can do the same.

From a conceptual point of view, Nvidia’s GM200 is the biggest gaming GPU the company could make, combining its more efficient Maxwell architecture, slightly more die area than GK110, and the removal of most of the FP64 hardware that was present in the Kepler architecture.

AMD’s Fiji, on the other hand, uses a slightly more efficient version of its stalwart GCN architecture that incorporates all of the enhancements introduced with Tonga and the R9 285. It retains all of the FP64 compute goodness and offers a more multipurpose solution than Nvidia’s GM200. It also dispenses with the die area a big GDDR5 memory controller would occupy, yet it has still grown quite a bit over Hawaii.

In the end, what we’re comparing are two different visions of what the best high-end GPU should be: an all-out technological marvel like Fiji, or a carefully tuned, task-oriented product like GM200. All things being equal, Nvidia’s vision would probably win out easily if AMD weren’t so far ahead of it in memory technology.

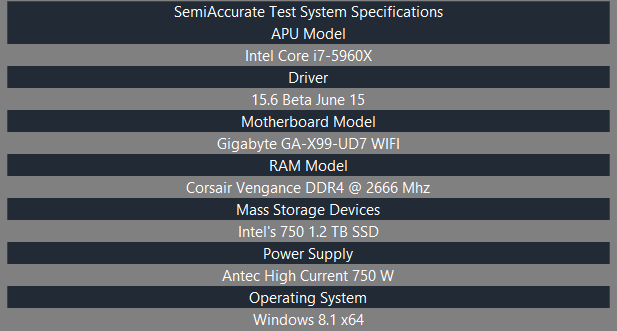

For the sake of transparency, we want you to know that AMD provided the R9 Fury X and R9 290X GPUs we’ll be testing today, along with codes for a couple of the games we’ll be looking at. Intel, Gigabyte, and Corsair provided our CPU, motherboard, and RAM, respectively. All the other parts we’re using were purchased at retail without the knowledge or consent of those companies. As always, you can find our raw testing data on OneDrive. We took no outside input for this article.

Unfortunately, we don’t have any GTX 980Tis on hand to compare our R9 Fury X to. *cough* No idea why that is… In any case, we do have an R9 290X on hand, so that card will be our point of comparison in this review.

Here are our test bench’s specifications.

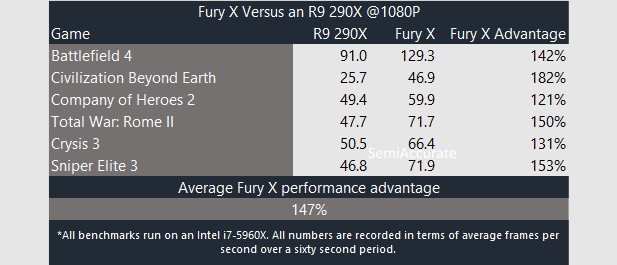

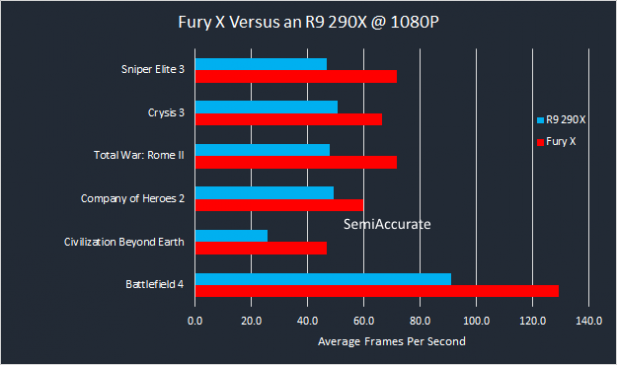

And here are the actual benchmarks we ran.

Looking at our 1080p benchmarks, we can see that the Fury X is consistently faster than our R9 290X across our suite of games. The gap ranges from 21 percent to 82 percent in modern DirectX 11 games and averages out to a nearly 50 percent advantage for Fiji over Hawaii in our benchmarks.
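To make percentages like these concrete, one common way to express the advantage in a given game is the Fury X frame rate divided by the R9 290X frame rate, minus one. The sketch below assumes that definition and uses hypothetical function names (`percent_advantage`, `summarize`). Because a single summary figure depends on how the per-game results are averaged, it reports both an arithmetic mean of the percentage gains and a geometric mean of the speedup ratios. Any frame rates fed into it are your own inputs, not our raw data.

```python
from statistics import geometric_mean, mean


def percent_advantage(new_fps, old_fps):
    """Percentage by which new_fps exceeds old_fps (121 vs 100 -> ~21.0)."""
    return (new_fps / old_fps - 1.0) * 100.0


def summarize(results):
    """Summarize per-game gains.

    results maps a game name to a (new_card_fps, old_card_fps) tuple.
    Hypothetical helper for illustration; requires Python 3.8+ for
    statistics.geometric_mean.
    """
    gains = {game: percent_advantage(new, old)
             for game, (new, old) in results.items()}
    ratios = [new / old for new, old in results.values()]
    return {
        "per_game": gains,
        "min": min(gains.values()),
        "max": max(gains.values()),
        # Plain average of the per-game percentage gains.
        "arithmetic_mean": mean(list(gains.values())),
        # Geometric mean of the speedup ratios, expressed as a percentage
        # gain; it is less swayed by a single outlier game.
        "geometric_mean": (geometric_mean(ratios) - 1.0) * 100.0,
    }
```

With two made-up games showing 21 and 82 percent gains, the arithmetic mean comes out to 51.5 percent while the geometric mean is about 48.4 percent, which shows how the choice of average can shift a headline number by a few points.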

This is a solid start. We were expecting big things from Fiji, and so far it hasn’t disappointed.

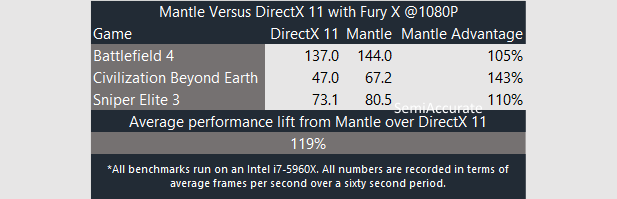

Moving to Mantle versus DirectX 11 performance, we can see that our Fury X picks up almost 20 percent more performance by switching to AMD’s own API. This bodes well for performance in upcoming DirectX 12 and Vulkan games.

From a performance standpoint, then, AMD’s Radeon R9 Fury X is a formidable offering. With driver updates and Windows 10 just around the corner, we can’t wait to see how Fiji ages. S|A

Thomas Ryan