When AMD (NYSE:AMD) first gave out power use numbers for Llano, I was far beyond skeptical. After having played with the chip for a few days, I can only say that the new Llano/Fusion architecture is a game changer, or at least the first step in a new post-CPU world. It really is that significant.

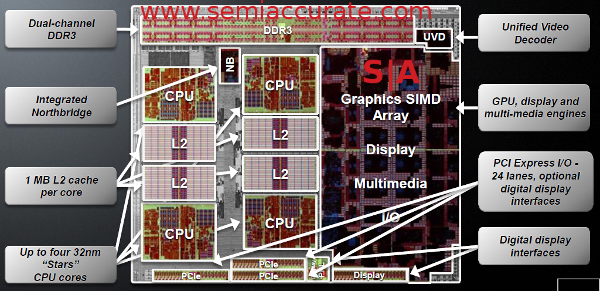

Let’s start out with what Llano is, and what Llano isn’t. From a high level, the chip looks like an old AMD quad core K10.5 with 400 ‘Evergreen’/HD5xxx shaders bolted on, and a UVD3 video block thrown in for good measure. Add in a second generation ‘turbo’ mechanism, and build it all on GlobalFoundries’ new 32nm HKMG process, and you have what AMD is calling Llano. So far, so ‘meh’, nothing you can’t do with a CPU and a cheap GPU card. In this case, the devil is in the details, not in the high level blocks.

The core blocks for Llano

The core itself is a heavily massaged Phenom II/K10.5/Stars core, with no transistor left untouched, or at least un-looked at. The core itself was shown off at ISSCC a little over a year ago, and we went into great detail here. On Llano, the core tweaking wasn’t about more speed as is usual. This time, it is all about power use, and AMD succeeded in a big way.

Let’s be blunt, the AMD K10.5 core, a derivative of the K10 first introduced in Barcelona, was a pig in terms of power use. When it debuted several years ago, it was late, hot, and far from cutting edge. Since then, the state of the art has moved on, new techniques came out, and for one reason or another, didn’t make it into any AMD CPU.

There were good scheduling reasons for this, but that back story is for another article. Intel set the standard for mobile CPUs and power use, and AMD wasn’t in the game. With Llano, AMD heavily revamped the K10.5 core, and brought it up to modern standards for power, updated the cache, and cleaned up a few lingering performance issues.

For the core, the biggest thing was the L2 cache going from 512K to 1M per core, something needed because Llano has no L3. To better utilize this, the prefetcher was also beefed up, as were most of the buffers. Some of the ops were also massaged, and the end result is a claimed 6% IPC improvement. While any update is welcome, the core itself is nowhere near the Sandy Bridge/iSomethingmeaningless 2xxx, closer to half of its performance than even with it. 6% isn’t going to make a difference. This would be the ‘meh’ part.

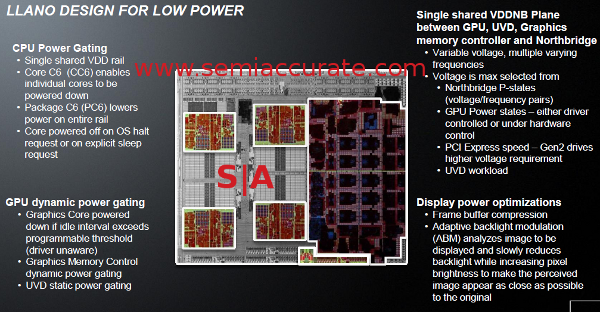

Some of the power tweaks

For power savings, the list is much more extensive. The biggest bangs are of course the 32nm HKMG process and the new C6 sleep state. This is something Intel has had forever, and essentially lets the CPU power down completely when not in use, and do so in a very granular fashion. Now, instead of having the two or three idle cores sucking power all the time, they can turn them off. Completely off, no power at all, unlike the previous hot off states.

There are two C6 states, core C6 and package C6. The problem is that there is only one voltage rail for all four CPU cores, so you have to gate off the cores in C6, but can’t vary the voltage to individual ones in higher C-states. This is a cost/complexity trade-off, and probably the right thing to do from a system design perspective.

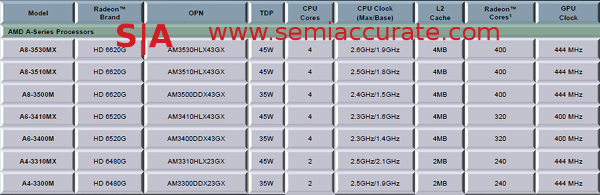

Moving on to the GPU, the macro level is very similar to an ATI Redwood core with UVD upgraded from that found in 5xxx chips to the one in 6xxx GPUs. The end result is a few fewer shaders than an HD6570, but those shaders are more tightly coupled to the system. AMD calls the GPU in Llano a 6620G, and that seems like a rational place to put it, about in line with the mobile equivalents. We expect the desktop parts to be named similarly.

If you recall, the recent AMD GPUs have been massively power gated, monitored, and capped, the HD6970 being the current high water mark with its PowerTune setup. The new methodologies behind PowerTune, basically measuring the work being done and looking up how much power that uses, have been added to Llano as well. You have the normal GPU sleep states, power monitoring, power gating, and a lot of more subtle low level optimizations.

These methods are carried over to the CPU side as well, basically it is a single PowerTune setup for both CPU and GPU, not two discrete units chained together. According to their ISSCC 2010 talk, the digital monitor compares 95 signals across each core about 100 times a second, and has greater than 98% accuracy in less than 10µs. This is more than adequate to deal with thermal management for both sides together.
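To make the "measure the work, look up the power" idea concrete, here is a minimal sketch of activity-based power estimation. The signal names, per-signal weights, and idle figure are invented for illustration, they are not AMD’s numbers, and the real monitor tracks far more signals in hardware.

```python
# Hypothetical weights: watts drawn by each block at 100% activity.
# These values are made up for the example, not taken from AMD.
WEIGHTS = {"alu": 6.0, "fpu": 8.0, "l2": 3.0, "decode": 2.0}
IDLE_WATTS = 1.0  # assumed baseline draw with no activity


def estimate_power(activity):
    """Estimate power from activity fractions (0.0 to 1.0) per block.

    No current is measured; the estimate is a weighted sum of how busy
    each monitored block was during the sampling window.
    """
    return IDLE_WATTS + sum(WEIGHTS[name] * frac for name, frac in activity.items())


def over_budget(activity, cap_watts):
    """True when the estimated draw exceeds the power cap."""
    return estimate_power(activity) > cap_watts


sample = {"alu": 0.5, "fpu": 0.25, "l2": 1.0, "decode": 0.0}
print(estimate_power(sample), over_budget(sample, cap_watts=8.0))
```

The point of the approach is that the estimate depends only on what the chip did, so the same workload gets the same answer on every chip regardless of how leaky that particular die is.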

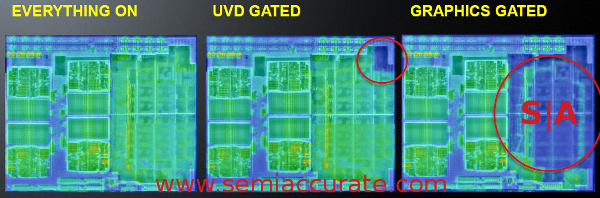

The GPU blocks, the northbridge, and the UVD cores are not on the same power plane as the CPU, they are separate. When the computer is idle, the entire CPU can go into C6 sleep, power down, and the GPU will stay awake. Meanwhile, the GPU can read from memory, keep the screen refreshed, and spit out frames without waking the slumbering CPU.

Same for the UVD block. You can theoretically play an entire movie with the CPU asleep, and this saves tons of power. Add in power states under both hardware and software control, and you have a power sipping system. While not directly under the aegis of Llano power, the GPU can also pull some power saving tricks like frame buffer compression and adaptive backlight control to minimize system power use.

IR shows how details make a BIG difference

Once again, the entire thing seems like a GPU bolted to a CPU, and both brought up to modern standards for power management. From the 10,000 foot view, this is correct, but like we said, the devil is in the details. The first of these is turbo, or more accurately, how turbo on the CPU interacts with the turbo on the GPU.

Any gamer out there, or anyone who has played with benchmarks knows that you will always have a bottleneck in any piece of software. A workload may be CPU bound, GPU bound, or memory bound, but it is almost never bound by more than one thing at the same time. Llano’s turbo uses this little quirk for greater performance.

On the surface, the idea is deceptively simple, the chip has a TDP, let’s say it is 45W. For the sake of argument, let’s arbitrarily say that the CPU cores take 30W, the GPU 15W, and the rest of the chip effectively nothing. Having all four CPU cores working at once is a vanishingly rare circumstance, but all 400 GPU cores simultaneously active is somewhat common.

On a non-Fusion architecture, this would mean that the CPU can simply power down a core or two, and use the TDP overhead to overclock the more active cores. This is a nice concept, but if you have two or three cores idle, you probably don’t need that much more headroom on the one being thrashed. Totally serial applications are possible, but with anything resembling modern optimizations, this is fairly unlikely.

A much more common scenario, games being a common example, is that you are very GPU bound. You can have all four cores either very lightly loaded or idle, but performance is totally capped. This means you have most of the CPU power allocation not being used. Similarly, if you are not gaming, but crunching numbers in OpenOffice, or doing hardcore calculations, your GPU is sitting there poking along at a fraction of its TDP.

Normally, this is a good thing, you are saving power, longer battery life and all that. Cue the singing polar bears. On a non-Fusion machine, that power is saved, and the idle unit waits for the bottlenecks to ease up, one can not help the other.

With Fusion, one of those details is a kind of load-balancing with power. Since the two units are on the same die, they can talk with very low latency, and possibly share power control circuitry. If the chip has a 45W TDP, and the CPU is only using two cores, it can be simplistically seen as pulling 15 of the 30W allocated to it. The other 15 are available, so the GPU can take that and up the clocks to work through the bottleneck faster. With a discrete CPU and GPU, this would be impossible.
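The simplistic 45W example can be written down as a toy budget allocator. This is only a sketch of the arithmetic in the example: the function name and the idea of a per-unit "demand" in watts are assumptions for illustration, and the real hardware balances power with far more inputs than this.

```python
def share_budget(tdp_watts, cpu_alloc, gpu_alloc, cpu_demand, gpu_demand):
    """Return (cpu_watts, gpu_watts) after lending unused allocation.

    Each unit first gets what it asks for, up to its nominal allocation.
    Whatever is left under the TDP is then offered to a unit that wants
    more than its allocation, CPU first, then GPU.
    """
    assert cpu_alloc + gpu_alloc <= tdp_watts
    cpu = min(cpu_demand, cpu_alloc)
    gpu = min(gpu_demand, gpu_alloc)
    spare = tdp_watts - cpu - gpu

    cpu_extra = min(max(cpu_demand - cpu, 0), spare)
    cpu += cpu_extra
    spare -= cpu_extra

    gpu_extra = min(max(gpu_demand - gpu, 0), spare)
    gpu += gpu_extra
    return cpu, gpu


# GPU bound game: CPU only needs 15W, GPU would happily take 30W.
print(share_budget(45, cpu_alloc=30, gpu_alloc=15, cpu_demand=15, gpu_demand=30))
# CPU bound number crunching: GPU idles along at 5W.
print(share_budget(45, cpu_alloc=30, gpu_alloc=15, cpu_demand=40, gpu_demand=5))
```

In the GPU bound case the GPU ends up with the 15W the CPU is not using on top of its own 15W, while the total never exceeds the 45W TDP.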

With PowerTune, AMD has all the needed parts in place to make this scheme not just work, but work better than having two discrete parts. If you set the GPU power capping conservatively, upping the TDP isn’t as much overclocking the GPU as it is removing the underclock. Evergreen/5xxx cores are able to run at 800MHz all day, so the 400-ish MHz of the Llano cores are not pushing the performance envelope. Turbo is almost trivial with the GPU blocks, even before the 40nm -> 32nm shrink.

The CPU side is similar, but not as dramatic. The K10.x cores run much faster than the mobile Llanos, so bumping the clock up quite a bit is not going to be much of a stretch. Take a look at the frequency tables for the mobile parts, the turbo speeds for the cores are perilously close to 50% higher than base.

Note the Turbo percentage, and the GPU speeds

Compare that to the 500MHz max turbo bump for current Phenom II/K10.x cores running at around 3GHz base, roughly 17%, and you are looking at about three times the relative speed-up. That is significant, and something you can’t really do well without the close coupling of CPUs and GPUs. It also bodes well for certain hidden modes in upcoming CPU architectures. To achieve this, there isn’t anything hugely new, just the smart application of current technologies along with close integration. This is one of those details with a huge payoff.
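For anyone who wants to check the "three times" figure, the arithmetic is short. The 3000MHz base and 500MHz bump are the round numbers from the paragraph above, and the 50% Llano figure is the "perilously close to" value, so treat the result as approximate.

```python
def turbo_gain(base_mhz, bump_mhz):
    """Turbo headroom as a fraction of the base clock."""
    return bump_mhz / base_mhz


phenom_gain = turbo_gain(3000, 500)  # 500MHz on top of roughly 3GHz
llano_gain = 0.50                    # mobile Llano, approximately 50% over base
ratio = llano_gain / phenom_gain

print(f"Phenom II turbo: {phenom_gain:.1%}, Llano turbo: {llano_gain:.0%}, ratio: {ratio:.1f}x")
```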

The next detail is a big one, possibly the big one, the new memory controller. In case it isn’t obvious, the memory controller on older AMD CPUs wasn’t designed to be directly connected to a GPU. There are major differences between how the two access memory. Differences in the caches both sides have or do not have. Differences in how sequential memory accesses are. To say the two are different is understating things.

If you look at things from a purely spec sheet perspective, the new memory controller in Llano can support 2 channels (128-bits wide total) of DDR3/1866, 1600 on laptops, up from 1600/1333. That is a nice boost, but not a CPU I/O paradigm shift like going from 64-bit DDR2/800 to 512-bit GDDR5/5GHz. If you are keen on specs, you might have noticed that a Redwood/HD5570 by itself has 1GB of the exact same 128-bit DDR3/1600, exactly what Llano has for both CPU and GPU.
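The peak numbers behind those configurations are easy to work out: bus width in bytes times transfers per second. The sketch below does just that. It gives theoretical peaks only, real sustained bandwidth is lower, and it assumes "GDDR5/5GHz" means an effective 5000 MT/s data rate.

```python
def peak_gb_per_s(bus_bits, mega_transfers_per_s):
    """Theoretical peak bandwidth in GB/s (1 GB = 1000 MB)."""
    return bus_bits / 8 * mega_transfers_per_s / 1000


configs = {
    "64-bit DDR2/800": (64, 800),
    "128-bit DDR3/1600": (128, 1600),
    "128-bit DDR3/1866": (128, 1866),
    "512-bit GDDR5/5GHz": (512, 5000),  # assumes 5000 MT/s effective
}

for name, (bits, rate) in configs.items():
    print(f"{name}: {peak_gb_per_s(bits, rate):.1f} GB/s")
```

Going from DDR3/1600 to DDR3/1866 takes the peak from 25.6 GB/s to about 29.9 GB/s, a bump of under 17% that now has to feed two units instead of one.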

To be fair, Llano’s GPU, at least on the mobile side, is quite a bit slower than Redwood, 400MHz vs 650 in the discrete card. Even then, the bandwidth that Llano has is, on paper anyway, not enough for both units, but it works. How? Magic, elves, Zero Copy (ZC) and Pin In Place (PIP). Although we like magic and elves, we will just tell you a bit about ZC and PIP.

There were several talks on ZC and PIP at AFDS that go into much more detail, so we will only give you the broad overview here. The idea is to minimize data movement by doing things smarter in software rather than throwing hardware at the problem. Most of this intelligence is in the memory controller, and a few additions here and there can save uncountable numbers of bits moving from one point to another, increasing performance and saving power while doing so.

To overly simplify things, Pin In Place does just what it sounds like, it takes a chunk of memory and makes its location static. This can save a lot of cycles in moving that data around from one point to another. If a thread knows where something is, or there is a static location for a type of data like a framebuffer or a texture cache, you don’t have to figure out where something is every time you want to work with it. PIP may sound simple, but it can save large levels of latency for both the CPU and GPU side.

Zero Copy is quite similar, and it is about what you probably think it is, doing a copy without doing a copy. To take a simple example, imagine what it takes to put a texture on the screen in a game. You load it from disk to memory, decompress it to another spot in memory, copy it to the GPU memory across the PCIe bus and then read it from that memory to the frame buffer while appropriately twiddling it. Things are much more complex than this, but the take home message is that there are a lot of copies when dealing with graphics.

In a Fusion type architecture, you load it to CPU memory, decompress it, and then the MMU just changes a pointer to ‘move’ it to GPU memory. One calculation instead of moving hundreds or thousands of K. That saves power, memory bandwidth, and time. There are several new busses in Llano, but that’s for other coverage.
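As a loose software analogy, not a model of Llano’s MMU, the difference between copying a buffer and handing over a reference to it looks like this in plain Python. The `texture` buffer and its contents are made up for the example.

```python
# A decompressed "texture" sitting in CPU memory (contents are arbitrary).
texture = bytearray(b"\x10" * 1024)

# Discrete card style: every byte is duplicated into a second buffer.
copied = bytes(texture)

# Zero copy style: a second handle onto the very same bytes, nothing moves.
shared = memoryview(texture)

# The CPU tweaks the texture after the hand-off.
texture[0] = 0xFF

print(copied[0] == 0x10)  # the copy is stale
print(shared[0] == 0xFF)  # the shared view sees the change at once
```

The copy costs time and bandwidth proportional to the size of the buffer, while creating the view costs the same no matter how big the texture is. That is the whole appeal, and also why simultaneous access raises the security questions that come with it.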

Although Zero Copy isn’t allowed yet for good reasons, security being chief among them, it doesn’t take a genius to see where this is going. Once a program can assume that both the CPU and GPU have simultaneous access to the same memory, you can do some amazing tricks. Think about the CPU tweaking textures in ways that are expensive for the GPU to do, in parallel to the GPU doing its thing. Live video streaming to textures is not a new trick, but it just got a lot less expensive. All this is out in the future, but the hardware limits just went away.

Both ZC and PIP effectively drop the bandwidth that is used by large amounts. The end result is that you take a CPU that has DDR3/1600 and a GPU that has DDR3/1600, and combine them into one unit that has DDR3/1866. Theoretically, that math doesn’t work all that well. The fact that Llano does actually perform comparably to the same level of discrete cards is a testament to how well ZC and PIP work.

The last detail sounds really odd when you first hear it, Llano has no L3 cache, and that is a good thing for power use. No, it has nothing to do with die size, yield, or cost, it is all just about power use. One of the problems that AMD has is that their cache is exclusive, meaning what is in the L2 is not duplicated in the L3. Intel uses an inclusive cache, so what is in the L2 is copied in the L3.

Without going into a huge debate about caches, there are good reasons to implement both schemes, it is one of those classic trade-offs. Exclusive caches do not duplicate L2 data in L3, so you effectively get more usable L3. If you have four 512KB L2 caches and one 2MB L3, you have effectively 4MB of usable system cache. The same in an inclusive system means you need more than 2MB of L3 to get more ‘usable’ space.
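The capacity side of that trade-off is simple arithmetic. The sketch below uses the four 512KB L2 plus 2MB L3 example and treats "usable" as the amount of unique data the hierarchy can hold, which is a simplification that ignores the L1s and real-world replacement behavior.

```python
def usable_cache_kb(l2_kb_per_core, cores, l3_kb, policy):
    """Unique data capacity of the L2 + L3 hierarchy in KB.

    exclusive: L2 contents are not duplicated in L3, so capacities add.
    inclusive: L3 holds a copy of everything in the L2s, so L3 is the limit.
    """
    total_l2 = l2_kb_per_core * cores
    if policy == "exclusive":
        return total_l2 + l3_kb
    if policy == "inclusive":
        return l3_kb
    raise ValueError(f"unknown policy: {policy}")


print(usable_cache_kb(512, 4, 2048, "exclusive"))  # 4096 KB, i.e. 4MB
print(usable_cache_kb(512, 4, 2048, "inclusive"))  # 2048 KB, i.e. 2MB
```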

The flip side of this is that on an inclusive hierarchy, if you need to snoop the cache of another core, a very common occurrence on modern multi-core CPUs, you only need to look at the L3 cache, anything in the lower levels will be there as well. On an exclusive cache hierarchy, you need to look at the L3, and then if the data isn’t there, you have to check all three of the other L2s as well. Short story, this operation takes much more time, if you don’t find the data quickly, you spend painful amounts of time looking.
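A toy model of the snoop cost shows the difference. It counts how many caches get probed to find a line on behalf of one core, using Python sets as stand-ins for cache contents. The function and its probing order are assumptions made for the example, real coherence protocols are far more involved.

```python
def snoop_probes(policy, line, l3, other_l2s):
    """Count caches probed while looking for `line` for a requesting core.

    inclusive: the L3 mirrors every L2, so one L3 lookup settles it.
    exclusive: an L3 miss says nothing about the L2s, so each of the
    other cores' L2s has to be checked in turn until the line turns up.
    """
    probes = 1  # the L3 is always checked first
    if policy == "inclusive" or line in l3:
        return probes
    for l2 in other_l2s:
        probes += 1
        if line in l2:
            break
    return probes


l3 = {"a", "b"}
other_l2s = [{"c"}, {"d"}, {"e"}]  # the three other cores' L2 contents

print(snoop_probes("exclusive", "a", l3, other_l2s))  # found in L3
print(snoop_probes("exclusive", "e", l3, other_l2s))  # found in the last L2
print(snoop_probes("inclusive", "e", l3 | {"c", "d", "e"}, other_l2s))
```

With the exclusive layout, the worst case touches the L3 and all three other L2s, which is also why any one of those L2s being powered off is a problem for the snoop.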

Even more problematic is that the L2 cache is considered part of the core, or at least is C6 gated with the rest of the core. If the core itself goes into C6, so does the L2. If the L2 goes into C6, it can’t be snooped. Since snoops like this happen millions of times a second, it basically means that with the AMD L3 cache configuration, C6 is impossible. If you could do it, the latency it would add to any snoop, likely hundreds of thousands of cycles, would destroy the chip’s performance.

As an aside, the L2 cache structure of Llano is the first one that AMD has changed from a 6T to an 8T architecture. This is done for very many reasons, but it also suggests that there are bigger changes in store for Bulldozer and what type of L3 cache it has. What worked for AMD the better part of a decade ago may not be right for the next one.

What you end up with is something that looks like a Phenom II and a HD5xxx/6xxx welded together, but takes less power than either one on its own. That is not a minor achievement, but it really works in the real world, and that is what counts. Llano is the first of a new breed, the changes AMD outlined at AFDS are immense, and the things you see are more groundwork than fully implemented plans. More is coming soon, and those just build on the cool stuff.

This brings us to the question of what AMD released, how much the chips cost, and how they actually perform. Both that and more depth on many of the details mentioned above, lightly sprinkled with Onion and Garlic, are coming in the next few articles. Llano may look like a welding of two known pieces, but the end result is anything but. There is lots more good stuff to come here. S|A

Charlie Demerjian
