Note: This is Part 4 of a series. Part 1 can be found here, Part 2 can be found here, and Part 3 can be found here.

2D has more uses than you think:

The last of the three functional blocks is the 2-D Pipeline, which does the normal functions plus one exceptionally nice feature that is almost absurdly useful. There is only one functional block here, called the 2D Drawing and Scaling Engine (DSE), but it has two main roles. It draws pictures on the screen and, as you might have guessed, it scales them up, down, and in other ways too. It can do both one-pass and two-pass filtering if you want the quality, but 2D is a pretty solved problem in modern GPUs. Don’t expect fireworks from this functionality.

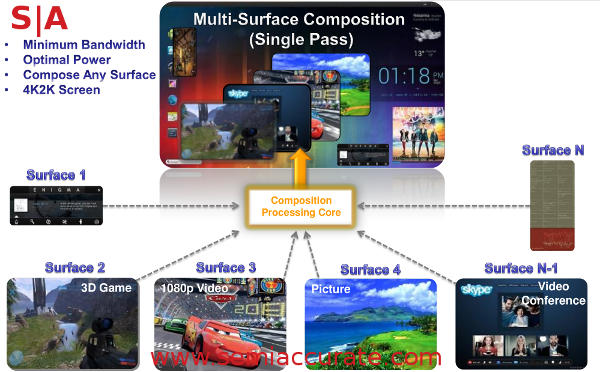

The other thing it does is actually worthy of fireworks: a hardware compositing engine. If you are not familiar with the term, it means that the Vivante GPU can take an image and cleanly overlay it with others on the fly. Imagine a windowed desktop with several overlapping windows and an active task in each one. This is not a big trick to do with brute force, any modern GPU can do it, but mobile GPUs are a bit lower on the brute force side of things and power does matter. With a hardware compositing engine you can do lots more than is possible with only raw power and, more importantly, do it with far lower energy use.

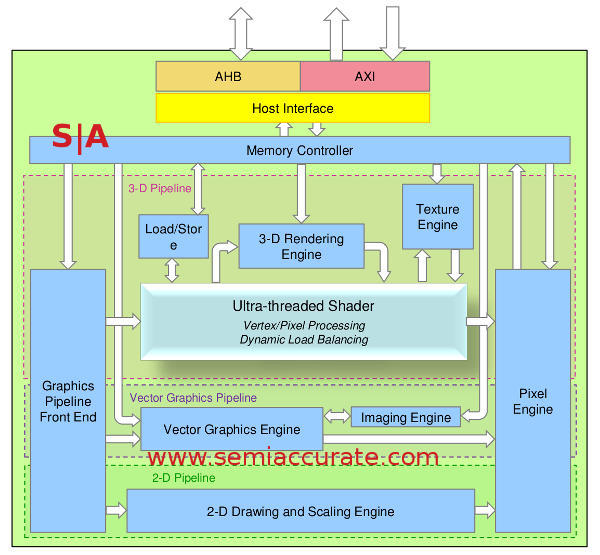

The Vivante GCCORE architecture

Why does this matter? Picture-in-Picture is one good use, video conferencing is another. Then you get into things like watching a video with an ad overlay. And a GUI overlay, not to mention a web info overlay, chat pop-ups, and the rest. In the impending world of ‘enhanced’ videos and UIs, compositing is not just a nicety, it is required, and that is one thing Vivante does really well. Chromecast anyone?

This little mobile GPU has some over-sized compositing abilities and is capable of compositing 2K and even 4K images. The current device can do 8 layers but 16 is possible with multiple cores if you need that many. The compositing engine can also seamlessly blend different color spaces like RGB and YUV on the fly. If you look at a web page with a simple overlay, a video or three, pop-ups, and the rest of what a modern teenager likes to do, you can see how 8 layers would be eaten up in a hurry.
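To make the layer blending described above concrete, here is a minimal software sketch of what a compositor does for a single pixel. It is not Vivante's implementation. The function names, the Porter-Duff "over" operator with straight alpha, and the full-range BT.601 YUV conversion are all assumptions chosen for illustration; only the 8-layer limit comes from the text.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float, float]  # (r, g, b, alpha), each 0.0..1.0

MAX_LAYERS = 8  # layer count quoted for the current device


def yuv_to_rgb(y: float, u: float, v: float) -> Tuple[float, float, float]:
    """Full-range BT.601 conversion (an assumed matrix, not a documented one).

    y, u, v are in 0.0..1.0 with u and v centered on 0.5.
    """
    cb, cr = u - 0.5, v - 0.5
    r = y + 1.402 * cr
    g = y - 0.344136 * cb - 0.714136 * cr
    b = y + 1.772 * cb
    clamp = lambda c: min(1.0, max(0.0, c))
    return (clamp(r), clamp(g), clamp(b))


def blend_over(top: Pixel, bottom: Pixel) -> Pixel:
    """Porter-Duff 'over' for straight (non-premultiplied) alpha."""
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1.0 - ta)
    if out_a == 0.0:
        return (0.0, 0.0, 0.0, 0.0)

    def mix(t: float, b: float) -> float:
        return (t * ta + b * ba * (1.0 - ta)) / out_a

    return (mix(tr, br), mix(tg, bg), mix(tb, bb), out_a)


def composite(layers: List[Pixel]) -> Pixel:
    """Blend one pixel from each layer, listed back to front."""
    if len(layers) > MAX_LAYERS:
        raise ValueError("more layers than the modeled engine supports")
    result: Pixel = (0.0, 0.0, 0.0, 0.0)  # start fully transparent
    for layer in layers:
        result = blend_over(layer, result)
    return result


if __name__ == "__main__":
    video = yuv_to_rgb(0.5, 0.5, 0.5) + (1.0,)  # opaque mid-gray video pixel
    overlay = (0.0, 0.0, 1.0, 0.5)              # half-transparent blue UI pixel
    print(composite([video, overlay]))
```

A brute-force approach runs something like this per pixel, per layer, on the 3D core's shaders. The point of a dedicated engine is to do the same work in fixed-function hardware.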

Compositing on a conceptual level

If you don’t think this matters and brute force is good enough, there are a few data points that may change your mind. On a Kindle Fire HD 8.9″ with the TI OMAP 4470 SoC that has the Vivante compositing engine, blending eight 1080p surfaces takes 180mW on the 3D core and only 40mW with the compositing engine. It is completely brute-forcible but at 4.5x the power, hardly ideal for a mobile device. Scaling and rotation tests are 70mW on the 3D core and 7mW on the compositing engine. Likewise, running a GUI is 60mW on 3D and 10mW on the compositing hardware. Better yet, the compositor has a claimed 4x performance and half the bandwidth usage, so there is no real downside.
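For anyone who wants the ratios spelled out, the short sketch below works through the quoted power figures. The numbers are the ones given above; the dictionary layout and function name are just illustrative.

```python
# Quoted power draw in mW: (3D core, compositing engine)
measurements_mw = {
    "blend eight 1080p surfaces": (180, 40),
    "scaling and rotation": (70, 7),
    "GUI": (60, 10),
}


def power_ratio(core_mw: float, engine_mw: float) -> float:
    """How many times more power the 3D core draws for the same task."""
    return core_mw / engine_mw


if __name__ == "__main__":
    for task, (core, engine) in measurements_mw.items():
        ratio = power_ratio(core, engine)
        print(f"{task}: {ratio:.1f}x, saves {core - engine} mW")
```

The three tasks come out at 4.5x, 10x, and 6x respectively in favor of the dedicated engine.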

Speaking of the Kindle Fire HD, the 7″ version has an OMAP 4460 without a compositing engine while the 8.9″ uses a 4470 with the engine. The 7″ has a 1MP screen and the 8.9″ has a 2MP screen, but the 8.9″ chews the 7″ up for image and text rendering performance because its main GPU is spared the overhead of doing the compositing. On 2D work it does make a big difference if the UI takes it into account, and Android seems to do just that.

Overall the 2D unit may seem a little tired and yester-tech, and without the compositing engine that would indeed be an accurate description. The compositing engine is, however, exactly what the multitasking, multi-threaded, multi-everything modern use case world demands. As far as we know there isn’t anyone out there that does compositing on this level, quite the oversight considering how any modern UI lives and dies by these techniques.

And Pixels Go Out:

The last part is what all three blocks, the 3-D Pipeline, Vector Graphics Pipeline, and 2-D Pipeline, feed into. As you can see from the system diagram, this block is called the Pixel Engine. Other than putting out pixels, what does this block do? Blending, depth and stencil computations, MSAA, and detiling/resolve. That last one effectively turns chunks or tiles, if used, into a linear frame buffer for display output. There isn’t anything out of the ordinary here other than the same unit potentially taking feeds from three pipelines.
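The detiling step mentioned above can be sketched in a few lines. Vivante's actual tile format was not described, so the layout here is an assumption: tiles are stored one after another in row-major order, and pixels inside each tile are row-major too. The function name and parameters are hypothetical.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def detile(tiled: Sequence[T], width: int, height: int,
           tile_w: int, tile_h: int) -> List[T]:
    """Rearrange a tile-ordered buffer into a linear, row-major frame buffer."""
    if width % tile_w or height % tile_h:
        raise ValueError("image size must be a multiple of the tile size")
    if len(tiled) != width * height:
        raise ValueError("buffer length does not match image size")

    tiles_per_row = width // tile_w
    pixels_per_tile = tile_w * tile_h
    linear: List[T] = [tiled[0]] * (width * height) if tiled else []

    for i, pixel in enumerate(tiled):
        tile_index, offset = divmod(i, pixels_per_tile)   # which tile, where in it
        tile_y, tile_x = divmod(tile_index, tiles_per_row)
        in_y, in_x = divmod(offset, tile_w)
        x = tile_x * tile_w + in_x
        y = tile_y * tile_h + in_y
        linear[y * width + x] = pixel
    return linear


if __name__ == "__main__":
    # A 4x2 image stored as two 2x2 tiles; values are the linear pixel indices.
    tiled = [0, 1, 4, 5, 2, 3, 6, 7]
    print(detile(tiled, width=4, height=2, tile_w=2, tile_h=2))
```

Real hardware formats often use more elaborate orderings within a tile, but the idea is the same: the display controller wants scanlines, so the tiles have to be unpacked on the way out.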

Miscellanea:

There are a few more interesting bits to talk about, starting with the configurability of the GCCORE itself. As you can tell from the previous parts of this story, the Vivante GPU architecture can be chopped up at a very granular level to meet OEM requirements. As we said earlier, there are a few features that depend on what the SoC maker wants to implement, especially on the power side.

The most important of these features is the voltage planes of the GPU itself. Each of the three pipelines is marked out with a slightly different background color, and those more or less denote the parts that can be on different voltage planes. While most SoCs don’t have this implemented, likely for cost reasons, there is no reason that it can’t be done. How much power this would save and what the tradeoffs are were not disclosed, but they are probably fairly minor.

Discussing this brought up another unusual point related to pipelines and multi-core GPUs. Instead of implementing a hypothetical three-pipeline GPU, a vendor could implement three one-pipe GPUs, one 2-D, one 3-D, and one Vector, or any combination of those. This would let them do some pretty impressive power gating for a low implementation cost. When the units in question were not being used, instead of turning off some or all of a pipeline they could turn the whole thing off, from the I/Os to the Pixel Engine. Vivante says the multi-one-pipe type GPUs are currently being implemented, so there looks to be some merit to it. Even if it is a minor implementation win, it is nifty.

One thing you may have noticed is absent is any mention of a video encode/decode block, mainly because there isn’t one in the Vivante lineup. For the last five or so generations, desktop GPUs have had video encode and/or decode to one extent or another, but it is almost always a discrete block in the GPU itself. Mobile GPUs have their video units as a separate block too, but the difference is that until recently the GPU and video blocks tended to come from different IP houses. Intel bought Silicon Hive for their encode/decode tech and ARM just announced their own internal unit called the V500. Vivante has no such block. S|A

Charlie Demerjian
