Let's face it, the current state of high rez monitors is miserable enough for AMD to finally push a solution, now called VESA Display ID v1.3. With luck, hardware supporting it will hit the market sooner rather than at DP1.2 speeds.

Yes, AMD gave VESA a full solution to most of the 4K standards misery, and now it is a standard, but what did AMD give and why does it matter? If you have been following the painful and miserable state of high rez panels, you will know about DVI, DL-DVI, HDMI, and DP in their various flavors. Let's take an overview of the current state of things and how broken it is.

DVI was the mainstream monitor connection that brought PCs into the digital era after analog VGA ran out of steam. It worked well for years, but it only went up to 1920 * 1200; if you wanted more, like a 2560 * 1600 30″ monitor, you needed two DVI signals. Enter DL-DVI, or Dual Link DVI, which simply ran two DVI links over one cable along two sets of pins. This allowed the monitor to take a 2560 * 1600 image split between the two links and put it back together. Since it was known that both signals would come down one cable in a fixed arrangement, there was never any question of which link carried which pixels (DL-DVI actually alternates pixels between the two links rather than sending a left and a right half). It worked well, seamlessly in fact.

HDMI in later iterations could do 2560 * 1600 natively, so all was good, other than HDMI being a dumb, royalty-bearing license that excluded those not in the club. DP was the solution; it was both technically far better and came without the onerous royalties. For some odd reason the TV makers, most of whom had some revenue on the line from HDMI royalties, balked at the idea of a royalty-free standard and did their best to stymie it.

PC makers, Dell in particular, didn’t like the idea of paying a very large and pointless per-port royalty to consumer electronics consortia for every monitor and video card they sold, so they pushed DP over HDMI as best they could. The short story is that a standards war ensued: the technically vastly superior and cheaper DP standard became common on PCs but not on consumer devices, and HDMI the reverse. It sucked for everyone and slowed progress to a painful degree.

This lack of progress meant 2560 * 1600 aka 4MP monitors were agonizingly expensive for far too long and had slow uptake for the same reasons. With panel pricing dropping 30% or so a year, panel makers were facing the commodity pricing death spiral and panic was creeping in. How do you get consumers to upgrade a big 1080p panel if there is no higher rez standard? How do you get them to upgrade if what they have is nearly perfect and as big as is practical for most homes? 120Hz panels were one attempt, 3D was another, and a few other techs died a lonely but extremely well-deserved death. 40-50″ panels went from $20K to $10K and now are well under $1K for full 1080p panels with more than adequate bells and whistles. Panic is setting in at panel vendors.

The only way out of this was a higher resolution, but 4MP wasn’t enough of a step up from 1080p’s 2MP to entice most consumers, especially with no content available in that format. Sure, you could scale, but scaling by a non-integer factor would probably look worse than just running at a lower rez with the correct pixel count. Worse yet for panel vendors, they couldn’t charge a hefty premium for 4MP monitors because buyers didn’t think they were worth the money.

Enter 4K. It was a definite step up; the 4K monitors SemiAccurate has played with were stunning in every way, including price. It was obvious that as soon as they came down to a sane price point they would be a worthwhile upgrade for anyone equipped with functional eyes. 4K monitors have 8MP, 4x the number in a 1080p/2MP panel, and they require all sorts of very fast signal processing hardware to work. That was expensive, but in two years the price has dropped from $30K to <$5K for decent looking panels. All appeared good unless you actually looked at the specs.
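For the curious, the pixel math is easy to check. This is a quick Python sketch, assuming "4K" means the 3840 * 2160 UHD format (some early 4K monitors were 4096 * 2160 instead):

```python
# Pixel counts for the resolutions discussed above, in megapixels.
# Assumption: "4K" = 3840 * 2160 (UHD), not the 4096 * 2160 cinema format.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "2560 * 1600": (2560, 1600),
    "4K UHD": (3840, 2160),
}


def megapixels(width: int, height: int) -> float:
    """Return the pixel count in millions."""
    return width * height / 1_000_000


if __name__ == "__main__":
    base = megapixels(*RESOLUTIONS["1080p"])
    for name, (w, h) in RESOLUTIONS.items():
        mp = megapixels(w, h)
        # Ratio relative to a 1080p panel.
        print(f"{name:>12}: {mp:.2f} MP, {mp / base:.2f}x 1080p")
```

The 2560 * 1600 panel works out to about 4.10 MP, under 2x a 1080p panel, while 4K UHD lands at 8.29 MP, exactly 4x. That gap is why 4K reads as a real upgrade and 4MP did not.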

Nice until you notice how broken it is

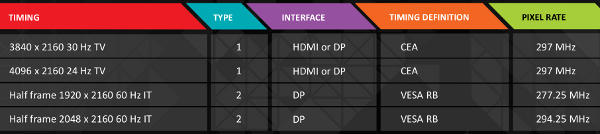

What, you may ask, is the problem? Easy: look at the standards for 4K listed above, specifically the first two. Notice anything amiss? 30Hz? 24Hz? What decade is this again? Do people really want refresh rates that were standardized before WWII? Like flicker and stutter? If so, the first two standards are for you, but beware that they don’t ship with crates of aspirin. Worse yet, those are the affordable monitors; the ones that do support acceptable refresh rates are still painfully expensive.

Not to be a downer, but price was the least of the problems for 4K; just look at the last two standards listed above. Notice the part about half frames? Any guesses what that means? If you are thinking two cables, you would be right on. Two cables means the image meant for the left half of the screen could end up on the right, or the other way around.

While this is easy enough to configure if you know your way around PCs, for consumer electronics it is a non-starter. If you have ever walked your grandmother through setting up an AV system over the phone, you know what a nightmare even the easy stuff is. Now add two cables and the need to configure them so the picture displays correctly. Then do it not on a PC but on devices with different features, UIs, and remotes. It is a lost cause.

To make things even easier for the consumer, we come to the tiny problem of the HDMI and DP standards war. HDMI wants to get its fingers into the pie and charge everyone a royalty for something or other; DP wants a standard that works and doesn’t charge the $1 or so a port that HDMI asks. Stalemate. Both sides argue, little moves forward, and the broken state of affairs we are in now goes on, with two cables and messy configuration for the average person.

As things stagnate, panel prices continue their 30% or so a year free fall and panel makers panic more and more. DP1.2 can carry the signal rates that 4K panels need on one cable; in fact it can do more, since it supports at least three monitors over a single cable. The aggregate pixel rate on the port is more than enough to push a 4K signal, but how do you decide which signal is left and which is right? DP has a perfectly functional way to get data back from the monitor, USB among other signaling methods, but there is no defined syntax for telling the source which stream goes where. Those tasked with doing such things are in the midst of petty squabbling and are getting nothing done, but doing it slowly. In fact, when they are not squabbling these committees tend to take years to do minor things, so don’t expect them to have a fix for the 4K setup problems in a relevant number of years.
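How much headroom does DP1.2 actually have? Here is a back-of-the-envelope Python sketch. It assumes 24-bit color, ignores blanking intervals (which add a few percent in practice), and uses DP1.2's HBR2 rate of 5.4Gbps per lane over four lanes with 8b/10b line coding:

```python
# Rough link-budget check: does a 4K (3840 * 2160) stream fit on one DP1.2 link?
# Assumptions: 24 bits per pixel (8-bit RGB), blanking intervals ignored,
# DP1.2 HBR2 = 4 lanes at 5.4 Gbit/s each with 8b/10b encoding.

DP12_LANES = 4
DP12_LANE_RATE_GBPS = 5.4      # HBR2 raw rate per lane
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b: 8 data bits per 10 line bits


def dp12_payload_gbps() -> float:
    """Usable DP1.2 bandwidth after 8b/10b overhead, in Gbit/s."""
    return DP12_LANES * DP12_LANE_RATE_GBPS * ENCODING_EFFICIENCY


def stream_gbps(width: int, height: int, refresh_hz: int, bpp: int = 24) -> float:
    """Active-pixel data rate in Gbit/s (blanking not included)."""
    return width * height * refresh_hz * bpp / 1e9


if __name__ == "__main__":
    budget = dp12_payload_gbps()
    for hz in (30, 60):
        full = stream_gbps(3840, 2160, hz)
        half = stream_gbps(1920, 2160, hz)  # one "half frame" tile
        print(f"4K@{hz}Hz: full {full:.2f}, two halves {2 * half:.2f}, "
              f"budget {budget:.2f} Gbit/s, fits={full <= budget}")
```

Under those assumptions a full 4K stream at 60Hz needs about 11.9Gbps against roughly 17.3Gbps of usable DP1.2 bandwidth, and two half-frame tiles need exactly the same total. The link is not the bottleneck; telling the source where each tile goes is.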

This stalemate is hurting not just panel makers but PC makers too. GPU vendors are at the point where even a low-end GPU can push a 1080p panel at reasonable frame rates in just about any game, so how do they convince PC users to upgrade? It is getting to panic time in GPU land too, and the HDMI and DP crew are about as likely to lend a hand as they are to stop squabbling. This is the long way of SemiAccurate recommending you don’t hold your breath waiting for it to happen.

Enter AMD and the standard that is now VESA Display ID v1.3. Sensing a stalemate that would, not could, go on for years, AMD wrote a standard and gave it to VESA. See stalemate. See stalemate end. See progress. See the HDMI and DP stupidity that hurts users carry on as usual, but luckily that is tangential to this standard. In any case, it solves the 4K tiling problem and, on the DP side, the 4K-over-a-single-cable problem at the same time. Yay for benign autocracies and progress.

What does VESA Display ID v1.3 (DID) bring to the table? First is a Tiled Display Topology Data Block that can communicate the topology of a tiled display and the capabilities of both ends of the cable. That includes identifying the display as one capable of actually displaying tiles, associating the different streams on a DP cable with specific tiles, describing the tiled topology to the other end, describing the location of each tile in that topology, and, rather unexpectedly, describing the bezel locations and sizes.
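To see why tile location matters, here is a simplified Python sketch of what a source could do with that information. The `TileDescriptor` fields and the `tile_origin` helper are hypothetical and only illustrate the idea; they do not mirror the actual DisplayID v1.3 byte layout. It also assumes every tile of a display is the same size:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TileDescriptor:
    """Hypothetical, simplified view of what a tiled-topology block conveys.

    Field names are illustrative only and do not follow the DisplayID v1.3
    binary layout.
    """
    topology_id: str  # shared by every tile of one physical display
    h_tiles: int      # tiles across
    v_tiles: int      # tiles down
    h_loc: int        # this tile's column, 0 = leftmost
    v_loc: int        # this tile's row, 0 = top
    width: int        # tile size in pixels
    height: int
    h_gap: int = 0    # bezel gap to the next tile across, in pixels (0 = seamless)
    v_gap: int = 0    # bezel gap to the next tile down, in pixels


def tile_origin(tile: TileDescriptor, compensate_bezels: bool = False) -> tuple[int, int]:
    """Top-left pixel of this tile on the combined desktop.

    Assumes all tiles of the display share the same size and bezel gaps.
    With bezel compensation on, the desktop skips the pixels "hidden"
    behind each bezel so lines stay straight across tiles.
    """
    step_x = tile.width + (tile.h_gap if compensate_bezels else 0)
    step_y = tile.height + (tile.v_gap if compensate_bezels else 0)
    return tile.h_loc * step_x, tile.v_loc * step_y


if __name__ == "__main__":
    # A 4K panel fed as two 1920 * 2160 tiles, with the streams arriving
    # in the "wrong" order: the right half shows up first.
    streams = [
        TileDescriptor("panel-A", 2, 1, 1, 0, 1920, 2160),
        TileDescriptor("panel-A", 2, 1, 0, 0, 1920, 2160),
    ]
    for index, tile in enumerate(streams):
        print(f"stream {index} -> origin {tile_origin(tile)}")
```

Because the monitor itself reports where each tile sits, stream 0 lands at x = 1920 and stream 1 at x = 0 regardless of the order they arrive in, so swapped cables or streams no longer swap the halves of the picture.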

If these seem like painfully basic things for a digital display to be able to communicate to a video source, well, they are. If they seem like they should have been in the first digital spec from day one or before, yes on that too. Unfortunately the state of standards bodies and their usual method of rule setting by hidden ulterior motive precluded this years ago. In fact it precluded it long past the point when it was critically needed and all the relevant players were feeling severe pain from its absence. Welcome to high-tech standards bodies. Luckily for us, AMD just stepped up and did it.

On top of that, if you have an AMD card that is Eyefinity capable, which would be just about all of them in the HD6000, HD7000, HD8000, and Rx lines, there are more goodies in store for you. AMD can use the Tiled Display Topology Data Block to do plug and play Eyefinity configuration. Better yet, this will allow future tiled displays to be autoconfigured without the monitor-specific driver patches that make things miserable now. In general, things should just work.
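Plug and play configuration then largely comes down to grouping incoming streams by the physical display they belong to. Below is a rough Python sketch of that grouping step using a hypothetical `Tile` report type and a hypothetical `build_surfaces` helper. It is not AMD's Eyefinity driver logic, just an illustration that assumes all tiles of a display are the same size:

```python
from collections import defaultdict
from typing import NamedTuple


class Tile(NamedTuple):
    """Hypothetical per-stream tile report (illustrative fields only)."""
    stream: int        # which stream/connector the report arrived on
    topology_id: str   # same value for every tile of one physical display
    h_tiles: int       # tiles across
    v_tiles: int       # tiles down
    h_loc: int         # this tile's column, 0 = leftmost
    v_loc: int         # this tile's row, 0 = top
    width: int         # tile size in pixels
    height: int


def build_surfaces(tiles: list[Tile]) -> dict[str, tuple[int, int, dict[tuple[int, int], int]]]:
    """Group tiles into logical displays: topology_id -> (width, height, layout).

    layout maps (column, row) to the stream feeding that tile. Displays
    missing a tile are skipped rather than half-configured.
    """
    groups: dict[str, list[Tile]] = defaultdict(list)
    for t in tiles:
        groups[t.topology_id].append(t)

    surfaces = {}
    for topo, members in groups.items():
        first = members[0]
        layout = {(t.h_loc, t.v_loc): t.stream for t in members}
        if len(layout) != first.h_tiles * first.v_tiles:
            continue  # incomplete: wait for the remaining streams
        width = first.h_tiles * first.width
        height = first.v_tiles * first.height
        surfaces[topo] = (width, height, layout)
    return surfaces


if __name__ == "__main__":
    reports = [
        Tile(0, "panel-A", 2, 1, 1, 0, 1920, 2160),
        Tile(1, "panel-A", 2, 1, 0, 0, 1920, 2160),
        Tile(2, "panel-B", 2, 1, 0, 0, 1920, 2160),  # second cable not plugged in
    ]
    print(build_surfaces(reports))
```

Here panel-A comes up as a single 3840 * 2160 surface with stream 1 on the left, while panel-B, with only one of its two tiles connected, is left unconfigured rather than shown as half a desktop.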

If you are looking for a decent 4K experience, any AMD video card that is an HD7000 or higher device should support DID and the 4K monitors that are compatible with it. Before you ask, we are not sure any such monitors exist yet, but given that supporting it is basically a minor firmware update, it shouldn’t be long before they are all over the place. About time. VESA Display ID v1.3 would have been useful five years ago, but it is still welcome now, and we have AMD to thank for it being here instead of being a proposed spec for 2019. S|A

Note: We know, we know, but thanks to moronically divided embargo dates we can’t say that yet.


Charlie Demerjian
