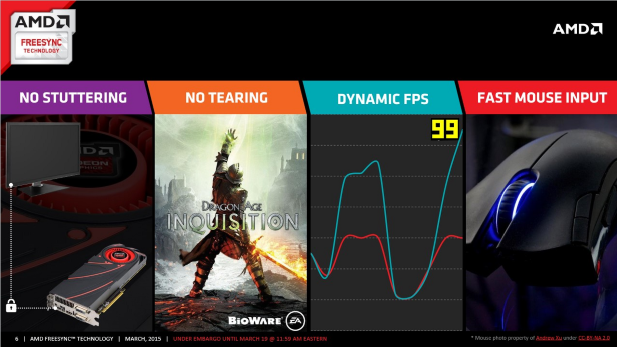

Yesterday AMD released the Catalyst 15.3 Beta, its first driver to offer public support for its FreeSync adaptive refresh rate technology. For those of you who have given up on trying to keep track of all these product names, FreeSync is AMD’s counter to Nvidia’s G-Sync technology, which launched last year. What FreeSync and G-Sync both enable is the display of new frames on the monitor at the same rate that they’re rendered by the GPU, rather than at the monitor’s fixed refresh rate. This means no more stuttering or screen tearing caused by a mismatch between the GPU’s ability to produce new frames and the monitor’s refresh rate.
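To make the stutter problem concrete, here is a minimal Python sketch of when frames reach the screen on a fixed 60 Hz display with Vsync versus an adaptive refresh display. The function names and the 45 fps workload are illustrative assumptions, not anything taken from AMD's or Nvidia's software, and the model ignores scan-out time and the panel's supported refresh range.

```python
import math

def fixed_refresh_present_times(frame_ready_ms, refresh_hz=60.0):
    """Fixed refresh with Vsync: a finished frame waits for the next refresh tick."""
    period = 1000.0 / refresh_hz
    # The small epsilon keeps a frame that lands exactly on a tick from
    # being pushed to the following tick by floating point noise.
    return [math.ceil(t / period - 1e-9) * period for t in frame_ready_ms]

def adaptive_refresh_present_times(frame_ready_ms):
    """Adaptive refresh (simplified): the display refreshes when the frame is ready."""
    return list(frame_ready_ms)

def intervals(times):
    """Gaps between consecutive on-screen frames, rounded to 0.01 ms."""
    return [round(b - a, 2) for a, b in zip(times, times[1:])]

# Hypothetical workload: the GPU finishes a frame every 1/45th of a second.
ready = [i * 1000.0 / 45.0 for i in range(1, 7)]

fixed = intervals(fixed_refresh_present_times(ready))
adaptive = intervals(adaptive_refresh_present_times(ready))

print("fixed 60 Hz gaps:", fixed)
print("adaptive gaps:   ", adaptive)
```

In this model the fixed-refresh gaps alternate between one and two refresh periods even though the GPU is delivering frames at a perfectly steady pace, which is the stutter described above. The adaptive gaps simply match the GPU's frame times.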

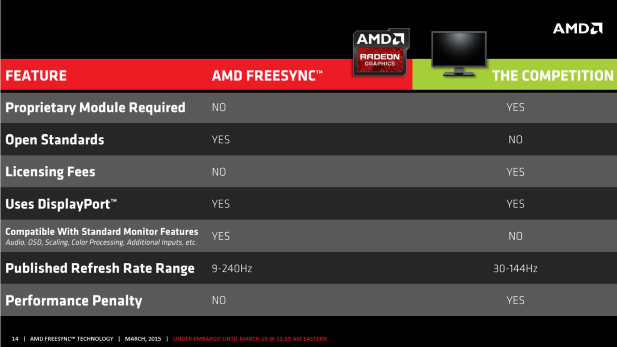

But where Nvidia’s G-Sync is a separate hardware module, installed in addition to the monitor’s video scaler, that display makers have to purchase from Nvidia under a licensing agreement, AMD is charging nothing for FreeSync. Monitor manufacturers need only use a video scaler chip from MStar, Novatek, or Realtek to offer support for FreeSync. AMD is not charging hardware manufacturers any licensing fees for FreeSync or selling FreeSync modules to OEMs. The effect of this strategy is that the initial batch of FreeSync-capable monitors is cheaper than comparable G-Sync-enabled monitors.

FreeSync also offers a big implementation advantage over G-Sync: while G-Sync monitors are limited to DisplayPort inputs only, FreeSync monitors can offer VGA, DVI, and HDMI inputs in addition to DisplayPort, although the adaptive refresh itself runs over the DisplayPort connection. This is because FreeSync is integrated into the video scaler on the monitor, and thus preserves all of the scaler’s functionality, while G-Sync is a module that is installed in addition to the video scaler and overrides parts of its functionality.

FreeSync is also marginally faster than G-Sync, by about one to five percent, because of how it manages the synchronization between the monitor and the GPU. Whereas G-Sync asks the display if it’s ready for the next frame, FreeSync grabs the display’s maximum and minimum refresh rates when it’s initially connected and then supplies frames at a rate within that range without asking the monitor for permission every time it submits a new frame. This removes the overhead of that handshake and improves performance just a tad.
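The "ask once, then stay in range" idea can be sketched in a few lines of Python. This is a toy model of the behavior as described here, not AMD's driver code: the `Monitor` and `RangeCachedSource` classes, the `queries` counter, and the 40 to 144 Hz range are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Monitor:
    """Hypothetical display that reports its supported refresh range."""
    min_hz: float
    max_hz: float
    queries: int = 0  # how many times the GPU side has asked the monitor anything

    def refresh_range(self):
        self.queries += 1
        return self.min_hz, self.max_hz

class RangeCachedSource:
    """GPU side: read the range once at connect time, then never ask again."""

    def __init__(self, monitor):
        self.min_hz, self.max_hz = monitor.refresh_range()

    def refresh_for(self, render_fps):
        # Clamp the requested refresh rate into the cached range
        # without any further round trip to the monitor.
        return max(self.min_hz, min(self.max_hz, render_fps))

monitor = Monitor(min_hz=40, max_hz=144)
source = RangeCachedSource(monitor)

rates = [source.refresh_for(fps) for fps in (30, 90, 200)]
print(rates, "monitor queries:", monitor.queries)
```

However many frames are submitted, the monitor is only queried once in this model. A per-frame polling scheme would instead increment the counter on every frame, which is the handshake overhead the paragraph above refers to.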

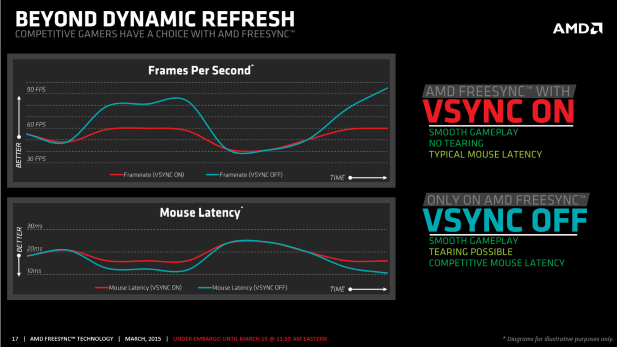

AMD is also trying to win points with the competitive video gaming market by allowing users to choose whether or not Vsync is enabled when using FreeSync. This is in contrast to G-Sync, which forces the use of Vsync. The sole advantage of turning Vsync off is that it reduces input latency by allowing your GPU to render frames above the display’s maximum refresh rate. This reintroduces the potential for screen tearing, but it reduces input latency and retains FreeSync’s no-stuttering policy, leaving users with a trade-off to consider.
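The trade-off can be laid out as a small decision function. This is a simplified sketch under stated assumptions: the function name, the returned fields, and the use of frame time as a stand-in for input latency are all illustrative, and the model only covers what happens at or above the display's maximum refresh rate.

```python
def above_range_behavior(render_fps, max_hz, vsync):
    """Simplified model of FreeSync's Vsync choice near the top of the range."""
    if render_fps <= max_hz:
        # Inside the adaptive range the display follows the GPU either way.
        delivered, tearing = render_fps, False
    elif vsync:
        # Vsync on: the GPU is held to the display's maximum refresh rate.
        delivered, tearing = max_hz, False
    else:
        # Vsync off: the GPU runs free, so frames can swap mid-scan and tear.
        delivered, tearing = render_fps, True
    return {
        "delivered_fps": delivered,
        "frame_time_ms": round(1000.0 / delivered, 2),  # rough latency proxy
        "tearing_possible": tearing,
    }

vsync_on = above_range_behavior(render_fps=200, max_hz=144, vsync=True)
vsync_off = above_range_behavior(render_fps=200, max_hz=144, vsync=False)

print("Vsync on: ", vsync_on)
print("Vsync off:", vsync_off)
```

With these example numbers, Vsync off yields the shorter frame time at the cost of possible tearing, while Vsync on caps the frame rate at the display's maximum and stays tear-free.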

On the downside, AMD’s current driver does not support the use of FreeSync with stereoscopic (3D) displays or CrossFire configurations. But it does offer Eyefinity support, and a driver with CrossFire support is coming next month.

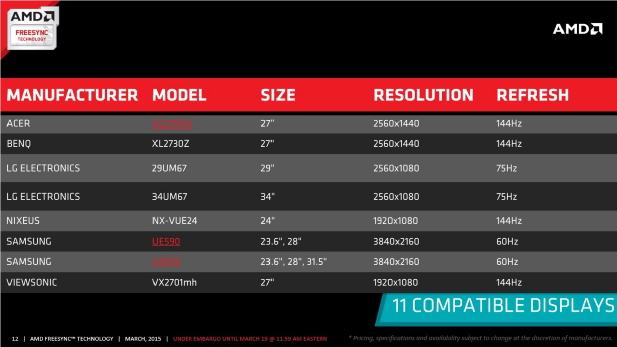

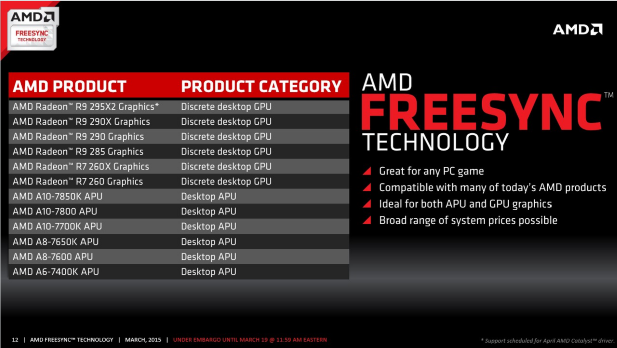

As of today there are 11 FreeSync-compatible displays on the market, with more set to arrive in the near future. AMD has even managed to muster a pair of 21:9 aspect ratio ultra-wide displays, which is something that Nvidia can’t lay claim to. More interestingly, though, AMD is only offering support for FreeSync on its Graphics Core Next version 1.1 and up GPUs. This means that the R9 290 series, R9 285 series, R7 260 series, and even AMD’s Kaveri-based APUs are supported. But older GCN GPUs like the HD 7970 and the R9 280 series and R9 270 series graphics cards do not support FreeSync.

AMD’s FreeSync is a potent counter to Nvidia’s G-Sync that not only brings support for adaptive refresh rates to AMD’s users but also advances the state of the art. FreeSync sets a new bar on price, performance, and accessibility that Nvidia is going to have to work hard with G-Sync to match. If anything, FreeSync is a perfect example of AMD’s continuing low-cost, standards-based philosophy towards new graphics technologies. FreeSync’s got a lot to offer, and no matter whose GPUs you use, the spread of adaptive refresh rate technology is something we can all get behind. S|A

Thomas Ryan
