A few weeks ago, ARM put out their new CPU/GPU compiler suite called Development Studio 5 (DS5). All of the components look interesting, but the system analyzer looks like one of the best out there for GPGPU-type work.

DS5 itself is a full suite built on Eclipse, available either as a stand-alone product or as plugins for the environment you are using now. It comes with a compiler, debugger, system analyzer, and even a complete ARM CPU simulator. There are three packages, ranging from the free Community Edition to the high-end Professional Edition, and thankfully it runs natively on Linux. Yay progress.

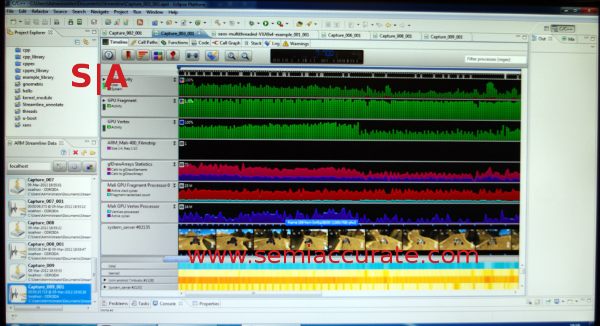

The big addition to DS5 is folding the Mali GPU debugger and system analyzer into the main product, with both showing a level of polish not often seen in products like this. Instead of treating the GPU as a black box (black hole?) filled with evil and random number generators, it lets you do useful things with combined GPU and CPU programming. Take a look at the screenshot from the ARM GDC booth.

Note the pictures near the bottom.

DS5 does just about everything you would expect from an analyzer like this: you can graph all the normal CPU usage bits and put them beside a host of GPU calls. You can view traces by hardware, by task, and in all the usual variants, or write your own if you are feeling bored enough. Since DS5 is Eclipse-based, though, someone has probably already done it, so just drag and drop.

The GPU traces also include many OpenGL calls rather than just simple hardware counters, and that looks to be much more useful on such a massively parallel device. Do you want to know which ALU is being used, or which OpenGL functions are causing the performance stutter? Combining this with extensive heatmap views, especially per process, is quite illuminating for game debugging.

One of the most useful things added to DS5, and to me it appears to be borderline revolutionary, is the filmstrip between the graphs and heatmaps in the screenshot above. It simply takes a thumbnail screenshot of the running process and slaps it up in sync with the performance traces from that moment. If you are having a GPU performance hiccup every so often, is it easier to figure out what is going on with numbers and code, or to just look at the screen and see what is in those particular frames?

The days of going back and forth between traces, code, and videos may not be over, but they are made one hell of a lot easier by this feature. Most of DS5’s counters are fairly light on overhead, but the filmstrip does add enough I/O that you might not want to keep it running 24/7. Then again, given how costly it is to work out the ‘old way’ what the filmstrip tells you at a sideways glance, it might not be a bad trade-off. If everyone else doesn’t copy, ummm, independently develop similar but not identical technology to the filmstrip soon, I will be shocked.

DS5 works on Android and Linux targets, and uses a combination of hardware and software on the target platform to get the job done. The suite recognizes all ARM cores, and also a long list of specific SoCs and development board types. For the moment, DS5 is only weakly linked to the OS and the programs being examined, but future iterations are being developed with everything baked into the drivers themselves.

In general, DS5 looks to be a pretty nice step forward, but the performance analyzer takes things a step farther. It adds enough new features to make the upcoming GPGPU programming tasks far less painful than they are now. If this doesn’t seem important, think about how few CPUs today come without an integrated GPU, and how much of a pain those GPUs are to do anything with. Then think about things like this and this. The future is compute with an integrated GPU, and DS5 is one of the first tools I have seen that takes away some of the pain of getting there. S|A

Editor’s note: You can learn more about this type of material at AFDS 2012. More articles of this type can be found on SemiAccurate’s AFDS 2012 links page.

Charlie Demerjian
