Intel released four new drives last week, two families of two enterprise SSDs each. One of these features 3D NAND and the other a new interface.

The new drives in question are all SSDs, all PCIe/NVMe, and all aimed at enterprises rather than consumers. Starting with the lower-end P3320 and P3520, we get the addition of 3D NAND to the tried-and-true Intel NVMe drives in both 2.5″ and PCIe form factors. No matter which form factor you use, the interface is the same, PCIe 4x.

As we mentioned earlier, the big difference between the P3320 and its SATA predecessor, the S3510, is the 3D NAND arrays, currently MLC with TLC versions coming “later this year”. Normally TLC NAND would make SemiAccurate run away screaming “don’t buy this, you fool”, but this is not a consumer drive and 3D NAND makes a big difference.

If you understand how 3D NAND works, you know it is very different from normal NAND, especially in the factors that affect endurance. Because of this, and because these drives are aimed at enterprises, we feel Intel is aware of and has mitigated the endurance risks associated with TLC. We probably wouldn’t make the same statement for other companies; you would see us running away screaming from their products.

As is normal with product updates like this, Intel is claiming that the P3320 cuts latency by about 4x over the SATA-based S3510, but the interface change has a lot to do with that gain. Similarly, IOPS go up by at least as much; a notably wider and lower-latency PCIe interface will do that. Intel is not giving out raw performance numbers for either drive yet so, well, sit tight.

More interesting are the bigger brothers called D3600 and D3700; the D stands for datacenter. These devices are aimed squarely at the SAS drive market and offer the one must-have feature that was unique to SAS, dual porting. Better yet, this is true dual porting, also known as active/active. As the name suggests, this means you can use the D3x00 line from two machines at once, although you will split the bandwidth between the two hosts.

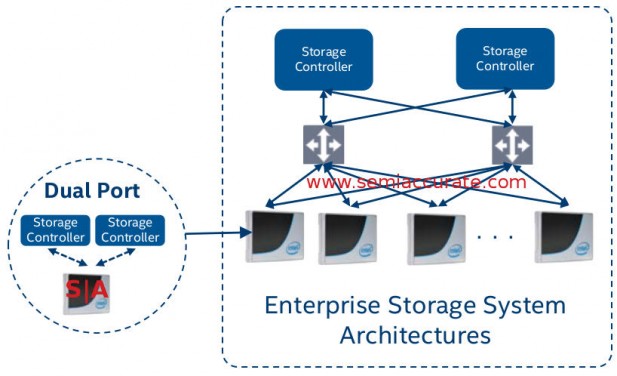

If you are running serious enterprise applications on a real array, dual controllers are a must, not an option. Because of dual ports, SAS ruled that arena. Even NVMe drives with PCIe capable of non-transparent bridging aren’t enough, because that solves the drive redundancy problem, not controller redundancy. Besides, it is still one box. Where dual porting comes in is drive arrays with multiple hosts, and even things like Avago’s PEX9700 switch don’t cut it if the drive is not aware of multiple masters. This is why Intel needed to do an active/active dual port drive; a large and lucrative segment of the market simply needs it.

Both the D3600 and D3700 are 2.5″ form factor drives, and both likely use an SFF-8639 connection to pass four PCIe3 lanes. These lanes can all be used by one controller or split between two controllers as two PCIe3 2x connections. If you want it in pictures, the net result looks like this.

Dual porting looks like it sounds

The major difference between the two drives shows up in the capacity: the D3700 comes in 800GB and 1.6TB capacities while the D3600 is available as a 1TB or 2TB device. The lower capacity of the higher-end drive is nicely explained by redundancy; the D3700 has much more of it. Physically both probably have the same raw NAND capacity, but the D3700 puts more aside for endurance and failure tolerance, a common tactic in enterprise devices. This also means the D3700 is more of a write-biased drive than the D3600.
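The capacity split is easiest to see as arithmetic. The sketch below is illustrative only: Intel has not given a raw NAND figure, so `ASSUMED_RAW_GB` is a made-up number chosen purely to show how one raw pool yields very different spare-area ratios. Only the usable capacities come from the announcement, and `spare_ratio` is a hypothetical helper.

```python
# Hypothetical raw NAND size in GB. NOT an Intel figure, purely an assumption.
ASSUMED_RAW_GB = 2304

def spare_ratio(raw_gb, usable_gb):
    """Return spare area as a fraction of usable capacity."""
    if usable_gb <= 0 or raw_gb < usable_gb:
        raise ValueError("need 0 < usable_gb <= raw_gb")
    return (raw_gb - usable_gb) / usable_gb

# Usable capacities of the larger model in each family, from the announcement.
d3600 = spare_ratio(ASSUMED_RAW_GB, 2000)
d3700 = spare_ratio(ASSUMED_RAW_GB, 1600)
print(f"D3600 spare: {d3600:.1%}, D3700 spare: {d3700:.1%}")
```

Whatever the real raw figure is, the direction holds: exposing 1.6TB instead of 2TB from the same pool leaves the D3700 far more spare blocks for wear leveling and for replacing failed ones.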

As you would expect, both drives thoroughly trounce SAS; even a 16Gbps SAS interface has half the throughput of a 4x PCIe3 connection. Likewise the PCIe stack is a lot quicker to traverse than a PCIe-connected SAS card, something that shows up as a commanding latency lead for the new devices. Throughput also puts SAS devices to shame, and the gap grows with queue depth but is always a large multiple.
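For a rough check on the interface math, here is a back-of-the-envelope sketch. It assumes the standard PCIe 3.0 figures of 8 GT/s per lane with 128b/130b encoding, takes the 16Gbps SAS number above at face value with no encoding overhead applied, and ignores protocol overhead on both sides. `pcie3_gbps` is a hypothetical helper name.

```python
PCIE3_GT_PER_LANE = 8.0      # PCIe 3.0 signalling rate, GT/s per lane
PCIE3_ENCODING = 128 / 130   # 128b/130b line encoding efficiency

def pcie3_gbps(lanes):
    """Line rate in Gbps for a PCIe 3.0 link, before protocol overhead."""
    return lanes * PCIE3_GT_PER_LANE * PCIE3_ENCODING

SAS_GBPS = 16.0  # figure quoted in the text, taken at face value

x4 = pcie3_gbps(4)  # one host using all four lanes
x2 = pcie3_gbps(2)  # each host's share when the lanes are split two ways
print(f"x4: {x4:.1f} Gbps ({x4 / 8:.2f} GB/s), x2 per port: {x2:.1f} Gbps")
print(f"x4 vs SAS: {x4 / SAS_GBPS:.2f}x")
```

That works out to about 31.5 Gbps for the full link, just under twice the SAS figure, and it also shows that each port of a dual-ported drive gets roughly what that single SAS link offers.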

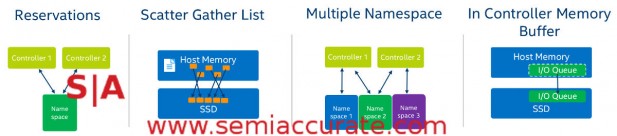

How do the new twins get their speed? Intel lists four main ways of getting the performance out of the drives: reservations, scatter/gather lists, multiple namespaces, and in-controller buffers. All do different things but have one goal based on the multi-user, multi-tenant, multi-threaded world of enterprise apps: handling a lot of outstanding requests. All four advances are aimed at making such multiple-request scenarios more efficient in various ways and at eliminating bottlenecks.

Four advances for four drives

Reservations are simple; they are just a method of allocating who can write to a device at any given time. This prevents thrashing at the cost of slightly higher latency in some cases, not the end of the world. In exchange for this delay each write takes less time, so multiple writes all get done faster. Reservations eliminate overhead.
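To make the idea concrete, here is a toy model of a write reservation shared by two hosts. It is a sketch of the concept only, not the NVMe reservation command set; the class, method names, and host labels are all invented for illustration.

```python
class ReservedDrive:
    """Toy model: only the host holding the reservation may write."""

    def __init__(self):
        self.holder = None  # host currently holding the write reservation
        self.blocks = {}    # logical block address -> data

    def acquire(self, host):
        """Grant the reservation if it is free or already held by this host."""
        if self.holder in (None, host):
            self.holder = host
            return True
        return False

    def release(self, host):
        """Give up the reservation; ignored if this host does not hold it."""
        if self.holder == host:
            self.holder = None

    def write(self, host, lba, data):
        """Reject writes from any host that does not hold the reservation."""
        if self.holder != host:
            raise PermissionError(f"{host} does not hold the reservation")
        self.blocks[lba] = data

    def read(self, lba):
        return self.blocks.get(lba)


drive = ReservedDrive()
assert drive.acquire("host_a")
drive.write("host_a", 0, b"journal")
assert not drive.acquire("host_b")  # host_b has to wait its turn
drive.release("host_a")
assert drive.acquire("host_b")      # hand-over: host_b may now write
```

The point of the model is that the two hosts never interleave writes to the same device, which is what keeps a dual-ported drive from thrashing.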

Next up is quite interesting, a scatter/gather list in host memory rather than on the driver or controller. If there are a lot of little writes, the driver on the host will bunch them up and send them as one I/O operation, saving tons of overhead versus a lot of little operations. This can improve throughput massively in corner cases like database work and little obscure jobs like that. Don’t underestimate scatter/gather lists; they can make a massive difference for several important job types.
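The same gather idea exists at the operating system level, which makes for an easy demonstration. The sketch below uses Python's `os.writev` (POSIX only) to hand several small buffers to the kernel as one request. It is an analogy for the concept, not how the drive or its NVMe driver implements scatter/gather lists; the file name and records are made up.

```python
import os
import tempfile

def gather_write(path, chunks):
    """Write a list of separate buffers with one vectored write call."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        # os.writev takes a sequence of bytes-like objects and submits them
        # as a single request (POSIX writev); it returns the bytes written.
        return os.writev(fd, chunks)
    finally:
        os.close(fd)

records = [b"row-1;", b"row-2;", b"row-3;"]  # made-up small writes
with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "table.dat")
    written = gather_write(target, records)
    with open(target, "rb") as fh:
        contents = fh.read()
print(written, contents)
```

Three buffers go out in one call instead of three, and the data lands contiguously without the host first copying it into one big buffer.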

Next up is multiple namespaces per device, and it does just what it sounds like. Instead of a drive being seen as a single drive, it can be seen as multiple drives or have different names for each host. This is quite useful if you have multiple controllers hooked up to multiple drives. In a two-host, two-drive scenario each host can see the directly connected device as “local drive” and the remote drive as “remote drive”. This will allow you to do lots of failover and redundancy tricks without flooding both drives with extraneous requests. Storage architects love this kind of feature.

Last up is the moving of the buffer from host memory to the drive controller itself. This adds quite a bit of cost to the controller because enterprise-level buffers tend to be pretty big, but it is worth the cost. Instead of I/O queue requests needing to traverse the SAS and/or PCIe buses, they are now kept locally. It doesn’t take a genius to realize that this will drop latency by large chunks, and that is exactly what Intel is claiming for the D3x00 lines.

So in the end there are four new drives, two iterative ones that sport 3D NAND and two new ones that are dual ported PCIe/NVMe. All are enterprise focused, all are high-end devices, and all are serious advances over their SATA or SAS predecessors. Given the large multiple gains in performance both lines offer there doesn’t seem to be any reason to stick with the old ways, and that is a really good thing. S|A

Charlie Demerjian
