Anyone watching the industry knows AMD (NYSE:AMD) and ARM (NASDAQ:ARMH) are up to something together; it is more obvious than two teenagers giggling when they glance at each other. What that something is, though, is not what anyone expects.


At AFDS/Fusion 11, both AMD and ARM said it, and said it explicitly, but no one seems to have noticed. That is a pity because what they are doing is really cool. No, I would go as far as to say it is technically brilliant and also the only thing that they could have done. What is this wondrous thing? Take a look at these slides from AFDS: the first four are from Phil Rogers’ keynote, the last two are from Jem Davies’ talk later the same day.

If you read these slides together and still don’t get it, hold still, I have a hammer^h^h^h^h^h^heducation rod that I am glad to help you over the head with. Just cover travel expenses, and this form of consulting and gene pool upgrading is free. Act now. While I am waiting for the checks to clear, I can explain things in a slightly less, umm, impact-ful, way.

Look at the first slide, the one about FSA and Open Platforms. Think about that. Open platforms abound now; you could say that AMD’s current Hypertransport system is one of those, and others have similar plans. ARM itself is very open, as long as you are a licensee.

The ‘Fusion’ concept is not about platforms in the sense of things stuck on the motherboard, it is about the exact opposite, things stuck on a die with a bit of careful sub-micron welding. An open platform in this case would mean that the interfaces ON the chip itself, not TO the chip, are open. This is key.

The second bullet, ISA agnostic, and the third, or at least the hardware partners part, are the other relevant pieces. Why? Think about it, why would AMD need to be hardware agnostic? They have one set of hardware interfaces, and they control both sides, right? Why would hardware partners care about FSA if AMD meant off-chip? There is this little thing called PCIe, and it works more than well enough, especially with the 3.0 spec additions. On chip, they would be in bed with AMD enough to make designing for whatever spec AMD presented rather trivial.

Should there be any little bits to paper over in early hardware, that is what the next slide, and the next technology, FSAIL, is for. With luck, it won’t be needed in a generation or two, but the first spin or three of some hardware may need it. Think of it like a BIOS patch for future Fusion architectures.

On the next slide, AMD says the finalizer can re-order memory. Why would you need this? AMD CPUs are all bound by the x86 spec for memory ordering, broken, backward and painful as that may be. The ATI GPUs may use a different scheme, but that is an easily fixable problem, and AMD has explicitly said that this will change in Southern Islands, coming in a few months. For an all ATI design, this requirement is silly.

If ARM uses a different model, they will be consistent with their IP partners too, so this is a pointless spec, right? Unless you want to mix components made for the two architectures, then it would be necessary. But mixing and matching components for both sides can’t happen, they are two different ISAs, and…. oh, first slide, bullet 2. Hmmmm……

But that would not be necessary if you were making a chip, you could just put a few gates in to flip the memory order with very low latency, right? Unless you wanted to make the whole scheme to be software agnostic, but software tends to be for a platform, if not a specific chip, right? The only reason for this would be to run low level software on multiple disparate platforms, something that is madness. Oh, bullet point 1, C++ and higher level language compatibility. Gotcha.
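For anyone who wants the memory ordering point spelled out, here is a toy message-passing litmus test. It is a rough sketch, not the real x86 or ARM memory model: the "strong" case simply keeps each thread's program order, the "weak" case lets each thread's two independent accesses swap, and every name in it is made up for illustration.

```python
from itertools import permutations

# Message-passing litmus test. Shared memory starts as data=0, flag=0.
# The writer publishes data, then raises a flag; the reader checks the
# flag, then reads the data.
WRITER = [("store", "data", 1), ("store", "flag", 1)]
READER = [("load", "flag", "r1"), ("load", "data", "r2")]


def interleavings(a, b):
    """Yield every merge of a and b that keeps each list's internal order."""
    if not a:
        yield list(b)
        return
    if not b:
        yield list(a)
        return
    for rest in interleavings(a[1:], b):
        yield [a[0]] + rest
    for rest in interleavings(a, b[1:]):
        yield [b[0]] + rest


def run(schedule):
    """Execute one global schedule and return the reader's (r1, r2)."""
    mem = {"data": 0, "flag": 0}
    regs = {}
    for op, loc, arg in schedule:
        if op == "store":
            mem[loc] = arg        # arg is the value to write
        else:
            regs[arg] = mem[loc]  # arg is the destination register
    return regs["r1"], regs["r2"]


def outcomes(keep_program_order):
    """Collect every (r1, r2) the toy model allows."""
    if keep_program_order:
        writer_orders, reader_orders = [WRITER], [READER]
    else:
        # Toy weak model: the two independent accesses in each thread
        # may become visible in either order.
        writer_orders = [list(p) for p in permutations(WRITER)]
        reader_orders = [list(p) for p in permutations(READER)]
    results = set()
    for w in writer_orders:
        for r in reader_orders:
            for schedule in interleavings(w, r):
                results.add(run(schedule))
    return results


strong = outcomes(keep_program_order=True)
weak = outcomes(keep_program_order=False)
```

In this toy, `(1, 0)`, meaning the reader saw the flag but stale data, shows up only in `weak`. Code that quietly assumes the strong behavior breaks the moment it lands on the weak side, which is exactly the kind of gap something has to paper over if blocks built for different models are going to share a chip.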

Then there is the final one of Phil Rogers’ slides, the future directions one. It simply screams “we are going to open up our chip”. How much more direct do you need?

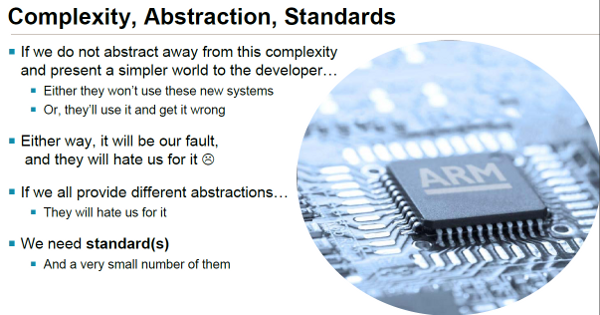

If that isn’t enough, look at the first one of the ARM slides, the one about memory model consistency. If you have an IP block for ARM CPUs that needs very low level and granular access to memory, particularly in a low latency but consistent manner, you can design it easily. ARM will be more than happy to document their scheme to any partner that needs it, it isn’t top secret in any way.

Now why would ARM need to worry about such things if all their partners have one target, and only one target, the ARM core itself, to write to? Their GPUs don’t need to talk to multiple memory models either. The only reason you would need this is if you wanted to mix and match, on the same chip, IP blocks from two or more different companies with disparate memory models. But something like that would be madness, the memory models between ARM and x86 are….. hey, wait a minute……

And then there is the last point on the last slide, standards. To quote the slide, “We need standard(s) – And a very small number of them.” In this case, two is probably too many, one is just about right. Silicon takes a while to make, much less standardize, so if there were only one standard in the making, the current and near future chips wouldn’t work, and no one would write software for them until they had a huge installed base.

No one would be crazy enough to preemptively write code for that kind of future model until it arrived. The whole concept is DOA. To make it work, and to get people interested, you would need something to paper it all over, and bridge the gap. It would have to be a low latency shim to flip bits here and there, like for memory ordering. It would have to be almost a virtual ISA for parallel programs where the software is ‘finalized’ to an ISA by a JIT compiler or ‘Finalizer’. Madness I tell you, it will never hap… hap…. Second slide, top bullet you say?
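To make the 'finalized to an ISA' idea a little more concrete, here is a minimal sketch of what such a shim could look like. The slides do not show what FSAIL actually looks like, so everything here is hypothetical: the virtual instruction names, the two target names, and the `finalize` function are invented for illustration only.

```python
# Hypothetical virtual instructions: (opcode, operand, operand).
# None of these names come from AMD or ARM.
PROGRAM = [
    ("st", "data", "r0"),          # plain store
    ("st_release", "flag", "r1"),  # store that publishes earlier writes
    ("ld_acquire", "r2", "flag"),  # load that later reads must follow
    ("ld", "r3", "data"),          # plain load
]

# Hypothetical targets: one that already orders these accesses in
# hardware, one that needs explicit barriers to get the same result.
TARGETS = {
    "strong": {"needs_barriers": False},
    "weak": {"needs_barriers": True},
}


def finalize(program, target):
    """Lower the virtual program to a list of target-specific pseudo-ops."""
    needs_barriers = TARGETS[target]["needs_barriers"]
    out = []
    for op, a, b in program:
        if op == "st":
            out.append(f"store {a}, {b}")
        elif op == "ld":
            out.append(f"load {a}, {b}")
        elif op == "st_release":
            if needs_barriers:
                out.append("barrier")  # earlier writes land first
            out.append(f"store {a}, {b}")
        elif op == "ld_acquire":
            out.append(f"load {a}, {b}")
            if needs_barriers:
                out.append("barrier")  # later reads wait for this load
        else:
            raise ValueError(f"unknown virtual op: {op}")
    return out


strong_code = finalize(PROGRAM, "strong")
weak_code = finalize(PROGRAM, "weak")
```

The same virtual program comes out as four operations for the `strong` target and six for the `weak` one, with the ordering fixes inserted at finalize time rather than baked into the software. A real finalizer would obviously do far more than this, but the shape of the trick is the same.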

In summary, while neither side has spelled it out in so many words, it is painfully clear that AMD and ARM are going to make a common on-chip interface and interconnection. You can take a Bobcat chip, yank the GPU, and slap a Mali or Imagination block in. Want a quad-core A15 that runs ATI demos? A shoelace tip controller that bootstraps a 6970? How about a Bulldozer or Trinity that uses that custom accelerator block that Google or Facebook is probably working on? Crypto blocks that are common to ARM and AMD? No problem. Get the picture now?

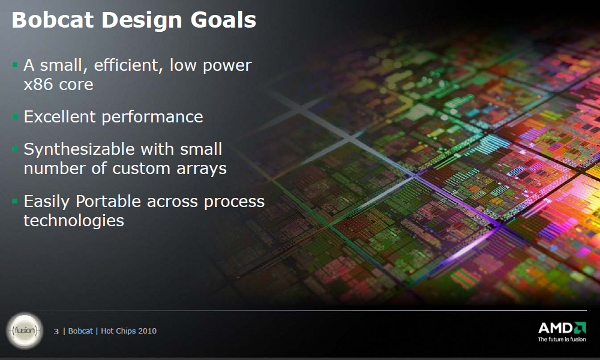

There is one other problem here: ARM cores and IP are the easy part. They are synthesizable with a small number of custom arrays and easily portable across process technologies. You can make them just about anywhere, and you can make them with a lot of different tools. You can’t do this with AMD CPUs, they are just the opposite, right?

To make this whole crazy scheme workable, you would need an AMD core that is synthesizable with a small number of custom arrays, and it needs to be easily portable across process technologies. This is a non-negotiable point, it must not just be in place, it needs to be in place long long before you expect to talk to customers and partners, much less expect silicon on the market. Long long before means you need to start designing the core with these goals in mind years before you see any fruit from the project.

Anyone remember the very last talk at Hot Chips 22? Anyone remember slide 3, reproduced below?

Zing! Score!

If the above explanations aren’t clear enough, there are three choices open to you. First, you can go watch the keynotes, click here and sign up. If that doesn’t do it, you can pay me lots of money to come out and explain it to you, I do consult for a number of large, and small, companies. Barring that, for a lot less money, I can bring the SemiAccurate Rod of Reeducation, affectionately called ‘Thud’ for reasons I can’t figure out, to your location. Call now, operators are standing by. This limited time offer will close when the first inter-mixable silicon hits. S|A

Note: If there is anyone who thinks Dirk Meyer didn’t have a consumer electronics strategy, or that it wasn’t in place long ago, well, you were wrong.

Charlie Demerjian
