Intel (NASDAQ:INTC) is launching a new baby Atom today called Medfield, and the hardware looks really good. The problem is that the software it runs remains, at best, an open question.

Let's look at the hardware first. The chip itself is called Penwell, one that was originally intended for a specific customer but has had its focus broadened a bit since. That’s water under the Manchurian CEO’s new yacht now, and Intel is moving on to bigger and better things. In a shocking twist, it looks like after several years and many more false starts, Intel will actually have a customer that puts Atom in phones! Actually, there are two initial customers, Lenovo and Motorola, both solid wins for Intel.

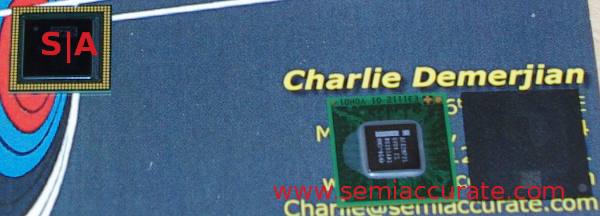

Medfield, Moorestown, and Moorestown’s chipset

One of the most interesting things about the new CPU, or the new SoC to be more accurate, is the set of pads along the rim of the carrier. If you look at the picture above, you can see Penwell on the top left, and Moorestown alongside the Moorestown chipset on the right. They are sitting on the author’s business card. Those pads on Medfield are for die stacking, in this case for memory.

The die stack shown at the briefing had the Medfield package on the bottom, a multi-layer carrier with TSVs on top touching the die, likely for heat dissipation, and a stack of memory on top. That memory stack is wire bonded in the standard way. The top has a heat spreader/physical protection, then some whipped cream, chocolate sprinkles, and a cherry.

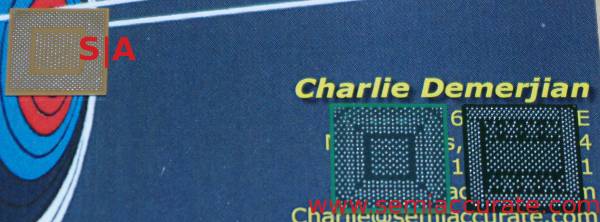

Same chips, other side

If you look at the bottom, there is one very interesting bit: the pin count. Medfield has fewer pins than Moorestown, and far fewer than the two chips combined. While most of this is from the DRAM connections that are no longer needed, there are probably a few saved by dropping the TDP of the chip. Also, going from two sockets to one, and one that looks to be a bit smaller than Moorestown, saves a lot of board space.

The SoC itself, called Penwell, uses the Saltwell CPU, part of the Medfield family. We will use them somewhat interchangeably in the article, mainly because at the moment, there is only one Medfield-based part announced. Same for Saltwell. In any case, this trio of code names describes the first mobile Atom CPU that is based on a 32nm process.

The first mobile Atom, Silverthorne, and the successor Moorestown/Lincroft, were both 45nm based, but from our recollection, done on completely different 45nm processes. If memory serves, Silverthorne was made on the same bog-standard Intel 45nm HKMG process that mainstream CPUs are made on. Moorestown was done on a 45nm LP process, which is not the same as the mainstream 45nm.

Atom stayed on 45nm (-LP) for many more years than you would expect because there was no 32nm-LP process to move it to. Now that there is, we get a 32nm mobile Atom, and Intel has promised to close the gap between mainstream and -LP process introductions in the future. It is quite interesting that Intel sees 32-LP as more appropriate for mobile Atoms than 22nm, but there is unlikely to be a single factor as to why. This simple bullet point is backed up by so many potential tradeoffs, arguments, and tests that it would fill multiple books to cover it all.

In any case, the 32nm mobile Atom SoCs are upon us, both with and without sprinkles, but the whipped cream is mandatory as it should be. How big is it? Intel would not say directly; the only number they would give is that it is a 144mm^2 Co-PoP SoC, 12 x 12 x 0.73mm, not counting whipped cream or optional cherry. If you measure with the top pads, our initial ballpark estimate for the die was 80mm^2. As it turns out, this is very close to the 82mm^2 that people with expensive measuring equipment tell us the SoC occupies, but don’t tell Intel that we know.

Intel claims the Saltwell core will consume 43% less dynamic power at the same frequency as the older Moorestown, or hit 37% higher frequencies. For a phone oriented device, the most important claim is 10x lower leakage, presumably per transistor, than the lowest leakage 45nm device. The slide shown was vague though, in places referring to 32-LP and plain 32 in others, but we don’t think the number is out of line in either case.

The CPU core itself is not all that different from the older 45nm parts, but the details have been comprehensively reworked with a focus on low power. On the performance side, the Gshare branch predictor went from 4K to 8K entries single threaded, 4K per thread with two threads running. Memory copy is improved, as were a bunch of unnamed microcode and scheduling bottlenecks. These should all improve performance, or more likely stop certain performance problems from happening.
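For readers who have not met gshare before, here is a minimal textbook sketch of the idea: a global branch history register is XORed with the branch address to index a table of 2-bit saturating counters. This is not Intel's implementation. The only number taken from the text is the table size (8K entries, or 4K per thread); the history length, the hashing of the address, the counter width, and all names are assumptions made for illustration.

```python
class Gshare:
    """Textbook gshare predictor; a sketch, not Saltwell's actual design."""

    def __init__(self, entries: int = 8192):
        # The table size must be a power of two so a bit mask can index it.
        assert entries > 0 and entries & (entries - 1) == 0
        self.mask = entries - 1
        # 2-bit saturating counters, all starting at 1 (weakly not-taken).
        self.table = [1] * entries
        # Global history: one bit per recent branch outcome (assumed width).
        self.history = 0

    def _index(self, pc: int) -> int:
        # The "share" in gshare: address and history share one index via XOR.
        return (pc ^ self.history) & self.mask

    def predict(self, pc: int) -> bool:
        # Counter values 2 and 3 predict taken, 0 and 1 predict not-taken.
        return self.table[self._index(pc)] >= 2

    def update(self, pc: int, taken: bool) -> None:
        i = self._index(pc)
        if taken:
            self.table[i] = min(3, self.table[i] + 1)
        else:
            self.table[i] = max(0, self.table[i] - 1)
        # Shift the real outcome into the history register.
        self.history = ((self.history << 1) | int(taken)) & self.mask


def mispredicts(predictor: Gshare, pc: int, outcomes) -> int:
    """Run a branch outcome sequence through the predictor, count misses."""
    misses = 0
    for taken in outcomes:
        if predictor.predict(pc) != taken:
            misses += 1
        predictor.update(pc, taken)
    return misses
```

Under these assumptions, `Gshare(8192)` stands in for the single-threaded case and two `Gshare(4096)` instances for the two-thread split. A bigger table mostly means fewer unrelated branches landing on the same counter, which fits the "stop certain performance problems from happening" reading above.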

For power savings, there were many more changes including an all new L2 cache. It has a specific circuit design made for the lowest possible power use while maintaining a 1:1 clock ratio with the core, and is on a separate voltage rail. This allows the CPU to drop voltage below the Vmin needed by the L2 SRAM. It can also be fully power gated in lower sleep states, or kept awake when the core sleeps if needed.

Dynamic power has also been addressed in many areas, large and small. Loop Stream Detection has been added to Saltwell, and ‘significant’ PLL power savings have been found. Basically, everything has been power gated more granularly, things go to lower sleep states faster, and more things can be independently C6 power gated. One interesting exception is that the Time Stamp Counter (TSC) and Local APIC Timers are now always running. It is interesting because the addition makes us wonder how previous Atoms functioned without them.

In a shock to no one, Intel has a chart that shows the usual power/performance curve that we can’t reproduce here. The lowest power state called C6_S0i0/i1 has everything possible turned off, PLLs are off, and all the caches flushed. It runs at, err, zero MHz, and consumes about 1mW. Intel says the exit latency to full speed operation is about 70µs. This is about as low as you can go without pulling the battery, a state that Intel calls SoC S0i2/i3 for reasons that defy logic. You will be forgiven if you slip up and call this “off” instead, but it does save 1mW of power.

Ratcheting up power draw, you next go to C4, where the L1 caches stay awake and the rest sleeps. This draws about 18mW and takes about 25µs to wake up from. C2 and C2E are similar, but cache snoops can wake the CPU core up in both. The wake from C2 sees the CPU running at full speed and C2E wakes it up to minimum speeds. Interrupts are blocked in either case, and wake times drop to 3.7µs in both. C2 consumes 80mW and the E saves 13mW from that. The power use is mainly because caches are not flushed at C2E and above wake states.

C1 and C1E keep the core awake but HALTed, and most clocks are off. C1 doesn’t drop the main clocks while E mandates minimum frequency and VID. They also both wake the CPU to full or low clock like their C2 analogs. This state curiously burns the same power, 80 and 57mW as C2/C2E but the exit latency is 350ns, 10x faster than the lower C2 states.
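The tradeoff between these states is easier to see in table form. The sketch below is ours, not anything Intel showed: it takes the power and exit latency figures quoted above for C6, C4, C2, and C1 (the E variants are left out to keep it short) and picks the lowest power state that can still wake up within a given latency budget. The function name and the selection rule are assumptions for illustration, not how any real idle governor works.

```python
from typing import NamedTuple, Optional


class CState(NamedTuple):
    name: str
    power_mw: float         # approximate power in this state, from the text
    exit_latency_us: float  # approximate time to get back to running


# Figures as quoted in the article; all are approximate.
STATES = [
    CState("C6", 1.0, 70.0),
    CState("C4", 18.0, 25.0),
    CState("C2", 80.0, 3.7),
    CState("C1", 80.0, 0.35),  # 350ns
]


def pick_idle_state(max_latency_us: float) -> Optional[CState]:
    """Return the lowest power state that wakes within the latency budget.

    Ties on power are broken in favor of the faster wake. Returns None if
    even the shallowest state listed is too slow, meaning stay in C0.
    """
    candidates = [s for s in STATES if s.exit_latency_us <= max_latency_us]
    if not candidates:
        return None
    return min(candidates, key=lambda s: (s.power_mw, s.exit_latency_us))


if __name__ == "__main__":
    for budget_us in (100.0, 30.0, 5.0, 0.1):
        state = pick_idle_state(budget_us)
        print(budget_us, state.name if state else "stay in C0")
```

With these numbers, a 5µs budget lands on C1 rather than C2, since both are quoted at 80mW and C1 wakes faster.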

The last two states are C0 HFM and LFM, High Frequency Mode and Low Frequency Mode respectively. In case it isn’t obvious, they both have everything on, nothing has to be gated, and nothing flushed. At a hypothetical 600MHz LFM, Penwell is said to consume 178mW, 292mW at 900MHz HFM. The core itself is said to clock at around 1.3GHz max with a turbo good for up to 1.6GHz. It pulls ~500mW and ~750mW respectively in these states. If you add in the optional 100MHz Ultra-LFM mode, Penwell runs at 100MHz while pulling ~50mW.

If you graph all of the above, you get a nice asymptotically converging line familiar to anyone who has taken basic algebra. We were not allowed to take pictures of it to post, so close your eyes and dream about frequency vs power graphs with the axes in classic black, the line itself in purple.
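Since the chart can't be shown, the quoted points can at least be tabulated. The sketch below is ours: it takes the five frequency and power pairs from the text, treats the "~" figures as exact, and assumes they all belong on one curve. It then works out energy per clock cycle, which is simply mW divided by MHz and comes out in nanojoules.

```python
# Frequency (MHz) -> power (mW), as quoted in the text. The "~" values are
# treated as exact here, which is an assumption for illustration only.
POINTS = {
    100: 50.0,    # Ultra-LFM
    600: 178.0,   # LFM
    900: 292.0,   # HFM
    1300: 500.0,  # max non-turbo clock
    1600: 750.0,  # turbo
}


def nj_per_cycle(freq_mhz: float, power_mw: float) -> float:
    """mW / MHz = (1e-3 J/s) / (1e6 cycles/s) = 1e-9 J, so nJ per cycle."""
    return power_mw / freq_mhz


def most_efficient(points=POINTS):
    """Return the (MHz, mW) pair with the lowest energy per cycle."""
    return min(points.items(), key=lambda kv: nj_per_cycle(*kv))


if __name__ == "__main__":
    for freq, power in sorted(POINTS.items()):
        print(f"{freq:>5} MHz  {power:>4.0f} mW  "
              f"{nj_per_cycle(freq, power):.3f} nJ/cycle")
```

By these figures the cheapest cycles are at the 600MHz LFM point, at roughly 0.3nJ each, and cost per cycle climbs on either side of it. The 100MHz mode draws the least power but pays the most per cycle, which would fit a fixed overhead that doesn't scale with clock, though that is our reading rather than anything Intel stated.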

In the end, you have a fairly standard looking Atom with incrementally better everything and notably better power use. Saltwell itself is ISA compatible with Core2 generation mainstream x86 CPUs just like the older Atoms. It has SSE3, SSSE3, but no SSSSE3 because we just made that last one up. It does have AMD64 instructions, but there is no word if they will be enabled or arbitrarily fused off like every previous Atom product.

The front end has a 32K Icache with pre-decode bits, and the normal 2 instructions per cycle decode. There are two 16-entry scheduling queues, one per thread, and the scheduler can pick 2 ops, both from one queue, per clock. This dual fetch is because the chip has a dual issue pipeline so it can execute both in a clock. Everything else seems unchanged, or changed only in minor ways. L2 is still 512K, and there are two AGUs.

The construction of the package is interesting, as is the process, but the Saltwell core is basically a minor tweak on the last two generations. Not much exciting there, but that changes radically when we move past the core and look at the Penwell SoC. S|A

Charlie Demerjian
