After years of anticipation, Intel is finally giving us more information about the Larrabee/Knights Corner/Phi architecture. Pieces of this multi-multi-core architecture are going to form much of Intel’s future, so let’s take a look at the details.

When SemiAccurate’s writers first got wind of what is now Phi, it was early 2006 and the chip was called Larrabee. Back then, it was aimed at high performance graphics, and the first Larrabee was billed as a GPU when it debuted at IDF in 2009. It then went from a graphics chip to a soap opera, with the first version being canned mere months later, the second stillborn, and the third stumbling forward on shaky legs. What we thought was the third generation GPU instead had the display controllers removed and many things tweaked, and it turned into a new HPC chip. Other than a moronic name, the concept was quite interesting.

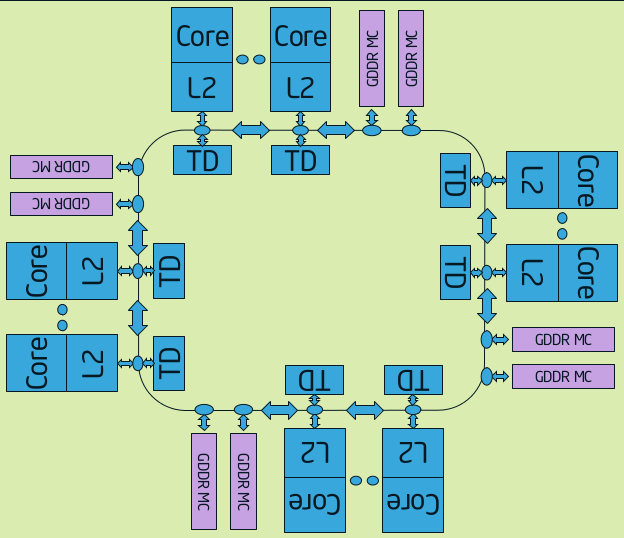

Brief history lesson aside, what is Knights Corner (KC), other than the future of Intel architectures? If you think of it as a Linux cluster on a chip, you would be about right. From a diagrammatic point of view, it is an unspecified (64, but don’t tell Intel we know) number of small 4-thread in-order execution x86 cores each with a 512-bit vector unit bolted on to one of the two pipelines. These cores each have their own L2 cache, and they are all connected together via the now standard Intel ring bus. It looks like this.

Rings, CPUs, and memory make Knights Corner

This is a simplified version of the diagram; the core counts and the memory controller counts are not specified. If you can imagine four groups of 16 cores plus a pair of memory controllers, you won’t be far off. In principle, this is the same ring structure that Intel made for Beckton, the 8-core Nehalem-EX, but it has some serious modifications to that first iteration. If you are not familiar with that technology, it was covered in detail in the linked Beckton article, and you might want to look it over before you continue on.

KC adds quite a bit to the ring to make it scale: instead of one ring there are ten, five in each direction. Unlike Beckton, KC can send data across the ring once per clock per controller, and there is a very complex controller to keep things running without collisions. The main rings are called BL and they are 64 bytes (512 bits) wide, one in each direction. BL carries data, while the narrower AD and AK rings carry address and coherence information. Interestingly, the AD and AK rings were found to limit scaling, something Intel fixed by simply doubling them.

The data carried by AD and AK are basically memory addresses, and both can go from many different sources to many different destinations. Doubling the widths of the rings wouldn’t do much to alleviate the problem because more packets are needed, not larger packets. Intel solved this problem by doubling the number of both AD and AK rings, there are now two of each in each direction or eight in total. This allows scaling to 64 cores, eight memory controllers, and many other supporting ring stops like PCIe controllers. In all, the count of ring stops is in the high double digits, but until Intel releases the numerical details, we don’t know exactly how many.
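The ring arithmetic above is easy to lose track of, so here is a small sketch that tallies it. The per-direction counts come straight from the description in this article; the dictionary layout and function names are purely illustrative.

```python
# Ring inventory per direction, taken from the article's description:
# one BL (data) ring, plus doubled AD and AK (address/coherence) rings.
RINGS_PER_DIRECTION = {"BL": 1, "AD": 2, "AK": 2}
DIRECTIONS = 2          # rings run both ways around the chip
BL_WIDTH_BYTES = 64     # width of each BL data ring


def total_rings():
    """All rings on the chip, both directions."""
    return DIRECTIONS * sum(RINGS_PER_DIRECTION.values())


def address_and_coherence_rings():
    """Only the AD and AK rings, both directions."""
    per_direction = RINGS_PER_DIRECTION["AD"] + RINGS_PER_DIRECTION["AK"]
    return DIRECTIONS * per_direction


print(total_rings())                  # 10
print(address_and_coherence_rings())  # 8
print(BL_WIDTH_BYTES * 8)             # 512 (bits)
```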

Most of these stops have a core, its associated L2 cache, and the tag directory (TD) at them. As we mentioned earlier, there are also stops for PCIe and GDDR5 controllers, plus a few more likely for housekeeping and background functions. Where Knights Corner really differs from Beckton is the arrangement of the cores and controllers. In KC, a group of cores is physically associated with a fixed number of memory controllers. All cores can access all memory controllers, but this physical proximity can have some advantages for HPC workloads if done correctly.

If you recall, the Beckton, Sandy Bridge, and Ivy Bridge architectures each have only one shared last-level cache, the L3. A core has a slice of that cache physically coupled with it, but a core does not load or save to the local slice; the address is hashed and the cache slice to which it is saved is determined by the hash value. A core theoretically hits the farthest L3 slice just as often as it does the local one. KC is just the opposite: the 512KB L2 is private to the core, and other cores cannot directly use it as a cache. If you didn’t just do a double take, 64 cores at 512KB each means there is no less than 32MB of L2 on Knights Corner, quite the staggering number.
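To make the contrast concrete, here is a minimal sketch of the two placement policies plus the capacity math. The 64-byte line size, the 64-core count (the article's unofficial number), and the XOR-fold hash are all assumptions for illustration; Intel's real hash function is not public in this article.

```python
LINE_BYTES = 64          # assumed cache line size
CORES = 64               # the article's unofficial core count
L2_PER_CORE_KB = 512     # private L2 per core, per the article


def total_l2_mb(cores=CORES, l2_kb=L2_PER_CORE_KB):
    """Aggregate private L2 across the chip, in MB."""
    return cores * l2_kb // 1024


def hashed_slice(address, num_slices):
    """Shared-cache style: the address, not the requesting core, picks the slice.

    The XOR-fold below is a toy hash, not Intel's real function.
    """
    line = address // LINE_BYTES
    return (line ^ (line >> 8) ^ (line >> 16)) % num_slices


def private_slice(core_id):
    """KC style: a core only ever fills its own L2."""
    return core_id


print(total_l2_mb())  # 32
```

With the hashed policy, which core asks is irrelevant to where the line lands; with the private policy, the address is irrelevant and only the requesting core matters.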

Just to confuse you a bit, the TD that is on each core is not private to the core, it is used by everything. Like the name suggests, it is a directory of tags, and each is associated with a fixed portion of the memory space. That said, it has no logical association with the core it is connected to other than physical location. This is what the AD and AK rings mainly talk to, and the TD is programmed to send as little data as possible to avoid congestion. If a TD is not tracking the address in question, that memory request simply passes on to the memory controller.

If the TD does have information on the address in question, that information is passed to the appropriate controller where it can be read directly from the L2 that contains the line. This snooping obviously saves a lot of time by avoiding a trip to local memory, or worse yet, across the PCIe bus. Since a large portion of HPC work is done with tile or block based algorithms, if a block is sized right for the L2 caches, the next iteration will very likely be cached at a physically close neighbor. Careful coding can pay off with KC and related architectures, the chip is laid out well for certain types of problems.
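The lookup flow described above can be modeled in a few lines. This is a toy software model, not the hardware protocol: the modulo mapping from address to TD, the 64-byte line size, and every name here are assumptions made for the sketch.

```python
LINE_BYTES = 64  # assumed cache line size


class TagDirectory:
    """Tracks which core's private L2 holds each line this TD is responsible for."""

    def __init__(self):
        self.owner_of_line = {}  # line number -> core id


def home_td_index(address, num_tds):
    # Assumption: a simple modulo stands in for the real address-to-TD mapping.
    return (address // LINE_BYTES) % num_tds


def record_fill(tds, address, core_id):
    """Note that core_id's L2 now holds the line containing address."""
    line = address // LINE_BYTES
    tds[home_td_index(address, len(tds))].owner_of_line[line] = core_id


def resolve(tds, address):
    """Return ('l2', core_id) if some L2 holds the line, else ('memory', None)."""
    line = address // LINE_BYTES
    owner = tds[home_td_index(address, len(tds))].owner_of_line.get(line)
    if owner is None:
        return ("memory", None)   # TD is not tracking it: go to the memory controller
    return ("l2", owner)          # read it from the L2 that already has the line


tds = [TagDirectory() for _ in range(4)]
record_fill(tds, 0x2000, core_id=3)
print(resolve(tds, 0x2010))  # same cache line as 0x2000
print(resolve(tds, 0x9000))  # never filled
```

Note that the TD consulted depends only on the address, which mirrors the point that a TD has no logical tie to the core it sits next to.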

The core itself

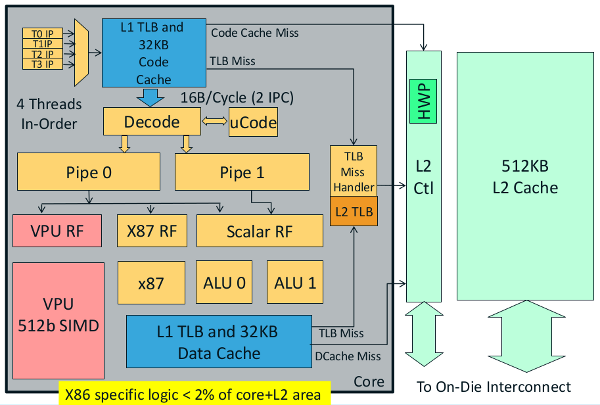

Each core is very similar to the older Intel Pentium, at least from an overview. It is a two pipe in-order superscalar design, but that is where the similarities end. The Pentium didn’t have four thread support, nor did it have a 512-bit vector engine bolted on to one of the pipes. If this doesn’t sound all that impressive, note the bit at the bottom of the diagram where it states, “X86 specific logic <2% of core+L2 area”. Making KC an x86 core rather than a specialized ISA has pretty tiny area drawbacks, especially since the cores do not make up all of the chip. Even with the rumored 500++mm^2 die area, 2% is not much to sacrifice to get free tools and a software base.

These cores are backed by 64KB of L1 cache, split into 32KB instruction and 32KB data caches. The L2 TLB has 64 entries, and the prefetcher will support 16 streams. This is important in light of one of the most important additions that Larrabee/KC brings to the x86 table, Scatter/Gather instructions. Scatter/Gather (S/G) does roughly what its name suggests, gather allows a programmer to fill a wide data field with chunks from widely varying addresses, scatter does the same in reverse.

S/G is all done with one operation, and can act on up to 16 different locations with non-linear strides in the addresses. This is obviously not just useful, but necessary in light of the 64-byte/512-bit vector engine that needs filling. Alternating through four threads gives the I/O blocks a little time to catch up, but memory can take hundreds or thousands of cycles to return an answer. Both are larger numbers than four if you didn’t catch the math.

To compensate for this, the S/G unit in KC is smart, it has to be. The unit does a little loop using three main steps: gather-prime, gather-step, and jump-mask-not-zero. Prime sets things up, step gets as many of the chunks as it can at once, and jump-mask-not-zero sends the memory controller back for another pass if all the elements have not been fetched. That may be simple in concept, but brute forcing things is never very efficient.

The smarts bit comes in when you look at how the caches are organized. Memory is fetched in cache line chunks which hints at the organization of the memory controllers on the ring, and why they are placed like they are. If one part of a gather is in a cache line, that is obviously loaded in the correct slot. If that same cache line contains one or more other chunks of the needed data set, they too are identified, read, and put in place at the same time. This may sound obvious, but it is harder to implement than it sounds. Given the massive amount of reads and writes it saves, this is a critical optimization. Scatter is much easier to do, the locations of the data to be written are known, so there is no searching needed, just a simple check.
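The gather loop and its cache line trick can be sketched in software. This is a behavioral model only, assuming 4-byte elements and 64-byte lines (so 16 elements per line); the function name, the pending mask as a Python list, and the choice of the first pending lane as the one that picks the line are all assumptions, not documented hardware behavior.

```python
ELEMS_PER_LINE = 16  # assumed: 64-byte cache line / 4-byte elements


def gather(memory, indices):
    """Model of prime / step / jump-mask-not-zero with per-line coalescing.

    memory  : list of elements, indexed by element number
    indices : element numbers to gather, one per vector lane
    Returns (gathered values, number of cache lines touched).
    """
    lanes = len(indices)
    result = [None] * lanes
    pending = [True] * lanes          # gather-prime: every lane still needs data
    line_fetches = 0

    while any(pending):               # jump-mask-not-zero: loop until mask is clear
        # gather-step: the first pending lane decides which line to fetch...
        lead = pending.index(True)
        line = indices[lead] // ELEMS_PER_LINE
        line_fetches += 1
        # ...and every other pending lane that lives in that line is filled too.
        for lane, idx in enumerate(indices):
            if pending[lane] and idx // ELEMS_PER_LINE == line:
                result[lane] = memory[idx]
                pending[lane] = False

    return result, line_fetches


memory = [i * 10 for i in range(1000)]
values, fetches = gather(memory, [0, 5, 17, 300, 3, 18])
print(values)   # [0, 50, 170, 3000, 30, 180]
print(fetches)  # 3
```

Six lanes are satisfied with three line fetches here because lanes 0, 1, and 4 share one line and lanes 2 and 5 share another; a naive loop would have gone to memory six times.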

There is a long list of core micro-architectural optimizations beyond the scatter/gather unit. Some are memory focused like streaming stores, but others are just faster implementations of existing instructions. Intel is claiming 1.8x more performance out of KC on a per cycle basis for single threaded code, usually the worst case. Not only that, the new chip uses memory more efficiently allowing greater scaling and less power used to shuffle bits around for little or no reason.

To save more power, Intel has implemented a bunch of C-states for KC, including one that is not really possible for a mainstream CPU. C0 is full power like in CPUs, core C1 clock gates one core, and core C6 power gates that core but not the L2 cache. Since other cores can snoop the L2, it can’t be powered down until all cores are in at least C1. The ring has the same problem, it can’t go to sleep until everything else does.

That brings us to Package Auto C3 (PC3), a mode that starts when all of the cores are power gated (C6). KC will then clock gate the L2s, rings, and the memory controllers, but not the memory I/O interfaces. Without a ring, TDs, L2s, or accessible memory controllers, the chip can’t do much, but it burns almost no power. PC3 is as far as the chip can take itself, turning itself off would be hard to undo. That said, the host PC can kick off PC6, a mode where everything but the PCIe interface is powered down to retention voltages, and the GDDR5 is put into self-refresh mode. This essentially turns KC off, and since it can’t really be woken up internally, the host CPU has to wake it up from the outside too.
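The package-level rules can be boiled down to a tiny decision function. This is a sketch of the policy as described here, not of Intel's firmware: the "PC0" label for the fully awake package and the assumption that the host only requests PC6 once every core is already in C6 are ours.

```python
def package_state(core_states, host_requests_pc6=False):
    """Pick a package state from per-core C-states.

    core_states       : list of 'C0', 'C1', or 'C6', one per core
    host_requests_pc6 : True if the host has asked for the deepest state
    'PC0' is a label invented here for "package fully awake".
    """
    # The chip can only enter PC3 on its own once every core is power gated.
    if not all(state == "C6" for state in core_states):
        return "PC0"
    # PC6 is never self-initiated; it needs the host on the other side of PCIe.
    return "PC6" if host_requests_pc6 else "PC3"


print(package_state(["C0", "C6", "C6"]))                          # PC0
print(package_state(["C6", "C6", "C6"]))                          # PC3
print(package_state(["C6", "C6", "C6"], host_requests_pc6=True))  # PC6
```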

All of this is interesting, but if you recall, the story started out by calling Knights Corner a Linux cluster on a chip. The cores with private L2s are some of the way to that goal, each one is discrete, and the on-chip ring is a good analog for a network. No token ring jokes please or you will be hit with an ARCnet card. Things really get interesting when you look at how the KC chips talk to the outside world, they use TCP/IP over PCIe.

KC has an OS running on it, in this case it is a single Linux image per chip. Larrabee and Knights Ferry, the architecture that preceded KC, used a BSD based OS. The OS can be SSH’d into and can run code just like a standalone CPU, but most users won’t use it in that way. That OS is presented with an interface that looks like a standard TCP/IP software stack, but there is no reason why it could not be hardware based in the future. If you have multiple KC cards in a server, they tunnel vanilla TCP/IP over PCIe.

In case it isn’t obvious, the standard way to do clustering on a rack of PC servers is with MPI, and that uses TCP/IP to talk between nodes. If you have code that runs on a current Linux cluster, it almost assuredly uses TCP/IP to converse at some level. The chances are good that the servers themselves run an x86 CPU just like KC. If you want to port that code to a system with CPUs and GPUs, you have to code in a proprietary language, use proprietary tools, and program for both x86 and another architecture too. OpenCL changes some of this, but it is still early, and doesn’t address all of these issues.

KC on the other hand doesn’t have those problems because it uses an x86 ISA, plus a proprietary vector unit, and talks standard TCP/IP. The tool chain is the same Intel compiler and tools that you know and likely have used, so the overall bars to hurdle are pretty low. Porting and getting code running is a far cry from optimization, but the starting point is much closer to the goal when using KC. SemiAccurate’s moles who have had KC and Larrabee silicon for a while now confirm that the concept does match their reality, code porting was easier than expected, and it did work about as well as advertised.

In the end, what you have is an unspecified number of cores, each grouped with an unspecified number of memory controllers, all running Linux. The world is reached with TCP/IP, and to the coder, it looks very much like a vanilla x86 cluster that is running MPI. While it isn’t perfect, or even a panacea, it sure beats learning a new architecture every generation or two. Couple this with a 22nm process that is at least a generation ahead of the competition, and you can pack more transistors into a given power envelope, not to mention die area. Knights Corner may not have the peak numbers of a GPU, but it looks to be far more accessible in the real world than anything that has come before it. S|A

Charlie Demerjian
