The big news for Intel at SC15 is their overarching SSF or Scalable System Framework. If you take 12 bullet points and roll them into one program, you get SSF or what SemiAccurate keeps writing SFF for some reason.

The idea behind SSF is simple: take the complex task of making a cluster for HPC and use a single framework and compatible set of tools to make the job easy. While making, programming, and tuning an HPC cluster will never actually be easy, Intel is trying to take as much of the pain and repetition out of the mix as possible. Not by coincidence it uses their hardware and software almost exclusively, but single vendor solutions are often the tradeoff for simplicity.

On the hardware side Intel is offering Xeon, Xeon Phi CPUs, and Xeon Phi cards/coprocessors. You can read about the Xeon Phi aka Knights Landing here, and pay close attention to the on-package Omni-Path option. That leads to part two of SSF, the interconnects: Omni-Path, True Scale Fabric, and Intel Ethernet silicon. The last six are Lustre, Xpoint memory which we will still not call by its idiotic second marketing name, silicon photonics, the HPC System Software Stack, Intel Software Tools, and the Cluster Ready Program. As you can see it covers the whole stack: hardware, interconnects, and software.

A handful of tools is nice and can be useful but, as most of you know, they can have a pretty steep learning curve. SSF hopes to combat this with some new bits coming in Q1, namely reference architectures, reference designs, and validation tools. There is a long list of partner companies, but providing reference anything is a fine line to walk with 10+ partners. That said, if Intel only puts out a robust set of validation tools, it will make the job of any architect or implementor much easier because there is a real dearth of such things available at the moment.

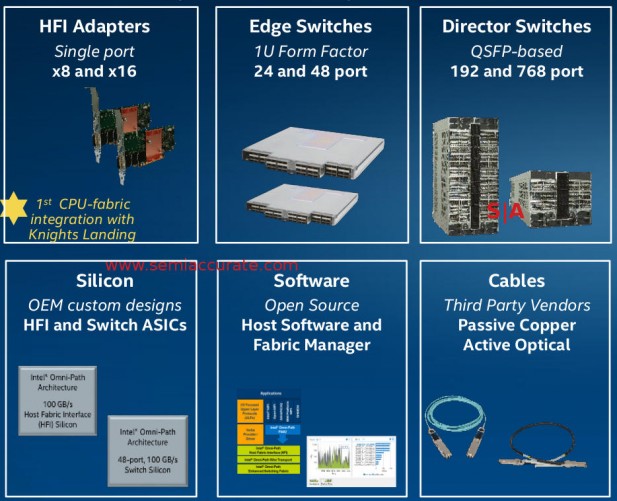

Pieces from NIC to massive switch

Next up is Omnipath, Intel’s high-speed, low latency interconnect. This time around Intel has a complete solution for users, from cards to switches and cables to software. As you can see above, the product span goes from NICs to full rack scale switches with up to 768 ports, a good starting point for a large-scale cluster. More importantly, if you care about latency, and if you are using Omnipath you do, then switches on this scale are a must because you can’t do multi-hops without inordinate pain.
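To put a rough number on the multi-hop pain, here is a back-of-the-envelope sketch using the textbook non-blocking fat-tree formulas. It is not anything Intel published. The 48-port radix for the small switch is an assumption made purely for illustration, the function names are made up, and treating the 768-port box as a single hop follows the framing above even though a large chassis may well be multi-stage inside.

```python
def max_hosts(radix: int, tiers: int) -> int:
    """Hosts a non-blocking fat tree of `radix`-port switches can attach.

    One tier is a single switch (radix hosts); each extra tier halves the
    ports available for hosts and multiplies capacity by radix / 2.
    """
    if radix < 2 or radix % 2 or tiers < 1:
        raise ValueError("radix must be even and >= 2, tiers must be >= 1")
    return 2 * (radix // 2) ** tiers


def worst_case_switch_hops(tiers: int) -> int:
    """Switches crossed on the longest path: up to the top tier and back down."""
    return 2 * tiers - 1


def tiers_needed(hosts: int, radix: int) -> int:
    """Smallest number of tiers that can attach `hosts` nodes."""
    tiers = 1
    while max_hosts(radix, tiers) < hosts:
        tiers += 1
    return tiers


if __name__ == "__main__":
    nodes = 768
    # 48 is an assumed small-switch radix; 768 is the large switch from the text.
    for radix in (48, 768):
        t = tiers_needed(nodes, radix)
        print(f"{radix}-port switches: {t} tier(s), "
              f"up to {worst_case_switch_hops(t)} switch hop(s) for {nodes} nodes")
```

Under those assumptions, the same node count that fits behind one big switch needs a second tier of small ones, and every extra tier adds two more switch traversals to the worst-case path.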

Last up is a seemingly minor point but one that can bring a lot of pain via high costs to a customer. Omnipath is a proprietary Intel standard with proprietary cables. If you recall the running joke that is Light Peak/Thunderbolt, you know that proprietary optical cables aren’t cheap and tend to never go down in price. Intel knows this is a problem for datacenters and is working to change that this time around by opening up the cables to anyone that wants to make them.

In theory competition will drive the price down, or at least prevent vendors from fleecing users, if users can buy cables on the open market. While we don’t foresee the same level of competition as USB devices, we think this is a good idea that will help with a potential impediment to adoption.

So in the end you can now see the overarching method to Intel’s madness, or at least the framework for it. SSF starts with the hardware, moves on to the interconnect, then ties it all together with software tools, all meant to work together. If the VARs are up to speed and can really live up to the value in their acronym, this package should help make HPC easier for everyone. At the very least you will be armed with a better set of questions to ask when you go to their HPC developer workshop before SC15. S|A

Charlie Demerjian
